Why “Just Be Careful” Fails Against Industrialized Fraud

🔒 Leader's Dispatch: Volume 35 (Click to Catastrophe, Part 6 of a 7-Part Series)

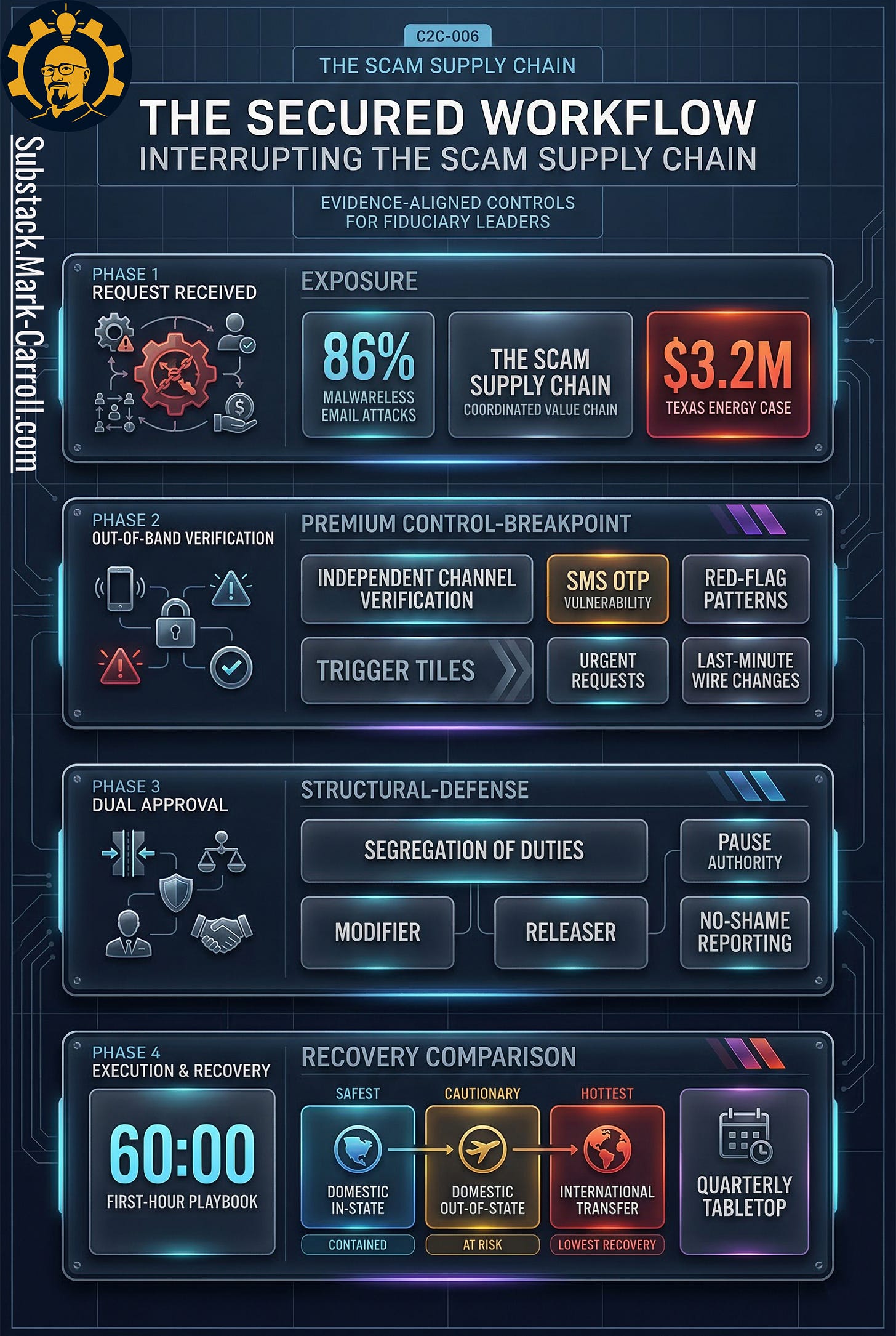

Episode 06: The Scam Supply Chain

Research Binder: the receipts (citations and source notes) are compiled in a PDF at the bottom of this article. Wherever you see [EVIDENCE], the supporting citations are in the Research Binder below.

A support agent gets a call from “the bank.” An AP lead gets a same-day request to update a vendor’s bank details. A marketplace seller gets told, “I sent too much. Can you send it back?”

Three incidents. Three teams. Three stories.

Same factory.

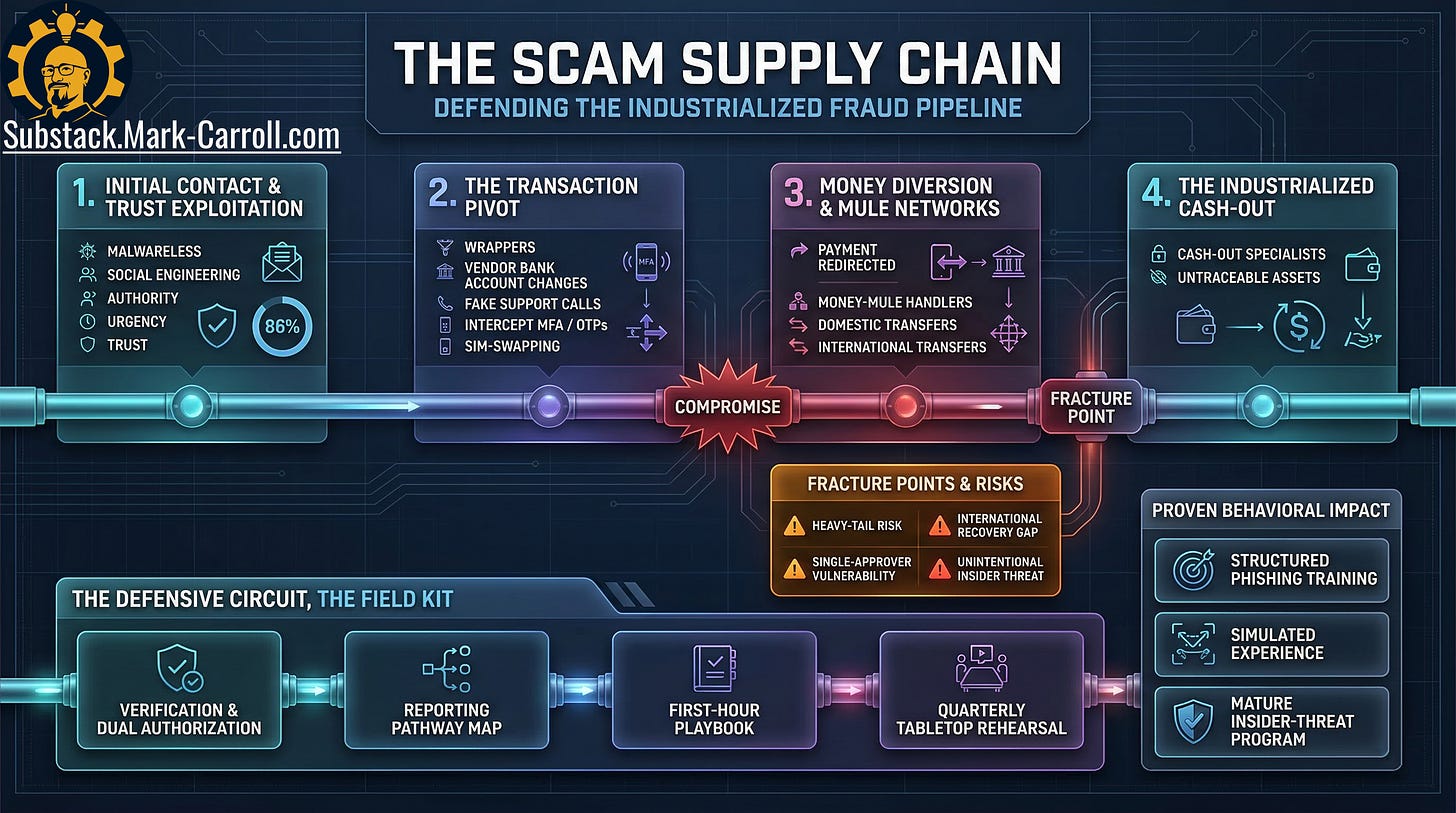

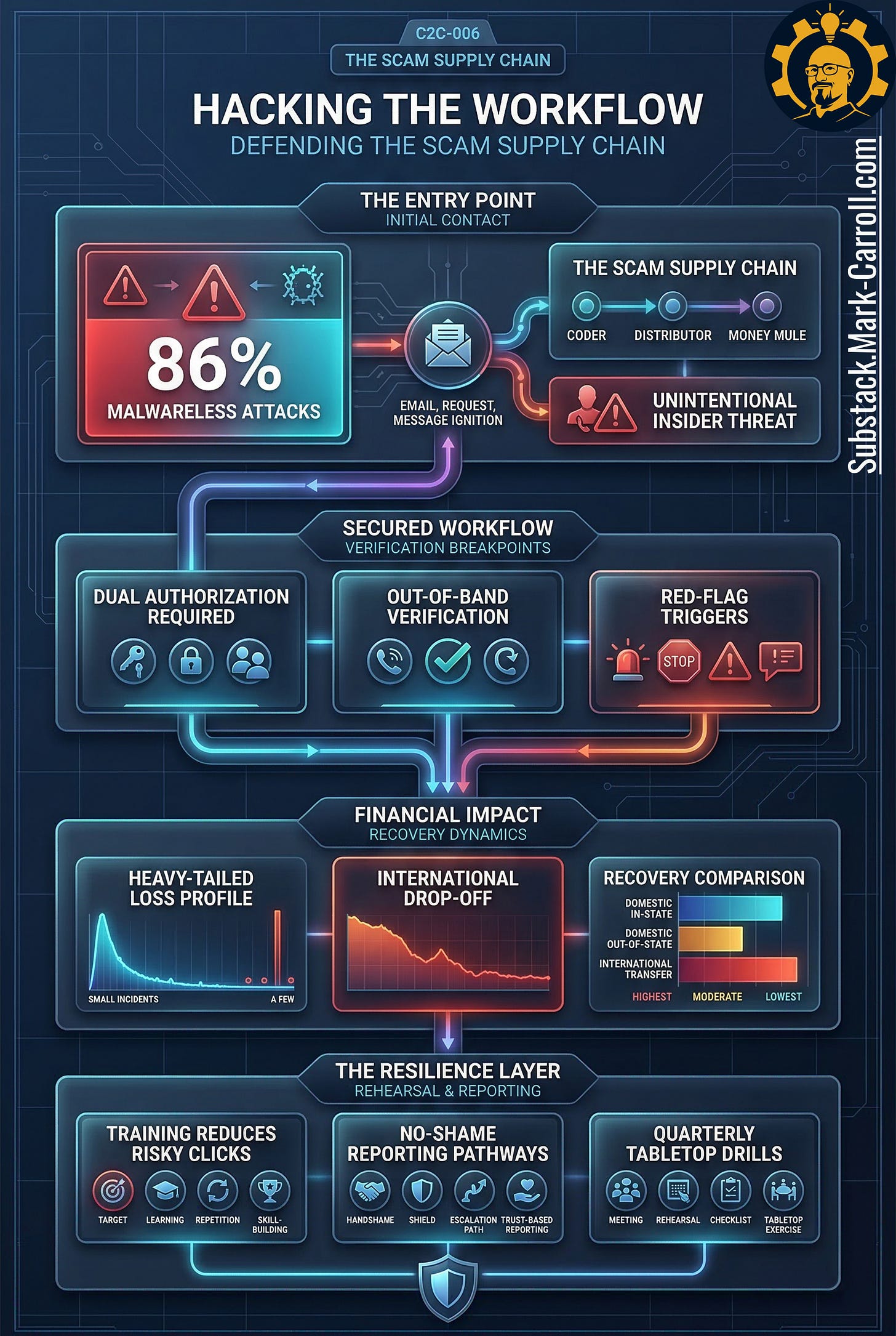

Under the costume, the same pressure architecture keeps repeating. By pressure architecture, I mean the predictable sequence of cues attackers use to get a reasonable person to move money or credentials: credibility cue, channel shift, urgency spike, money move, recovery sequel. The story changes. The pressure architecture does not. The same social move can wear the clothes of a bank callback, a vendor update, a help-desk request, or a refund correction. The details differ. The middle logic stays familiar.
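For teams that log suspicious requests, the five cues can be written down as a shared vocabulary. What follows is a minimal sketch, not a detection product. The `Cue`, `Request`, `pressure_score`, and `same_architecture` names are hypothetical, and the threshold of three shared cues is an assumption chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Cue(Enum):
    """The five cues named in the paragraph above."""
    CREDIBILITY = "credibility cue"
    CHANNEL_SHIFT = "channel shift"
    URGENCY = "urgency spike"
    MONEY_MOVE = "money move"
    RECOVERY_SEQUEL = "recovery sequel"


@dataclass
class Request:
    story: str  # the costume: "bank callback", "vendor update", ...
    cues: set   # the Cue members someone actually observed


def pressure_score(request: Request) -> float:
    """Fraction of the five cues present in this request."""
    return len(request.cues) / len(Cue)


def same_architecture(a: Request, b: Request, min_shared: int = 3) -> bool:
    """Two different stories count as the same pattern when they share
    enough cues. min_shared=3 is an illustrative assumption."""
    return len(a.cues & b.cues) >= min_shared


# Hypothetical incidents from two different teams.
bank_callback = Request(
    "bank callback",
    {Cue.CREDIBILITY, Cue.CHANNEL_SHIFT, Cue.URGENCY, Cue.MONEY_MOVE},
)
vendor_update = Request(
    "vendor update",
    {Cue.CREDIBILITY, Cue.URGENCY, Cue.MONEY_MOVE},
)

print(same_architecture(bank_callback, vendor_update))
```

The point of the sketch is the comparison, not the score: the two incidents carry different stories and still match on the middle logic.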

That is why one polished email, one well-timed callback, or one “quick favor” can land in the long tail of losses that define a quarter, or define the year if the money crosses the wrong boundary before anyone owns the next move.

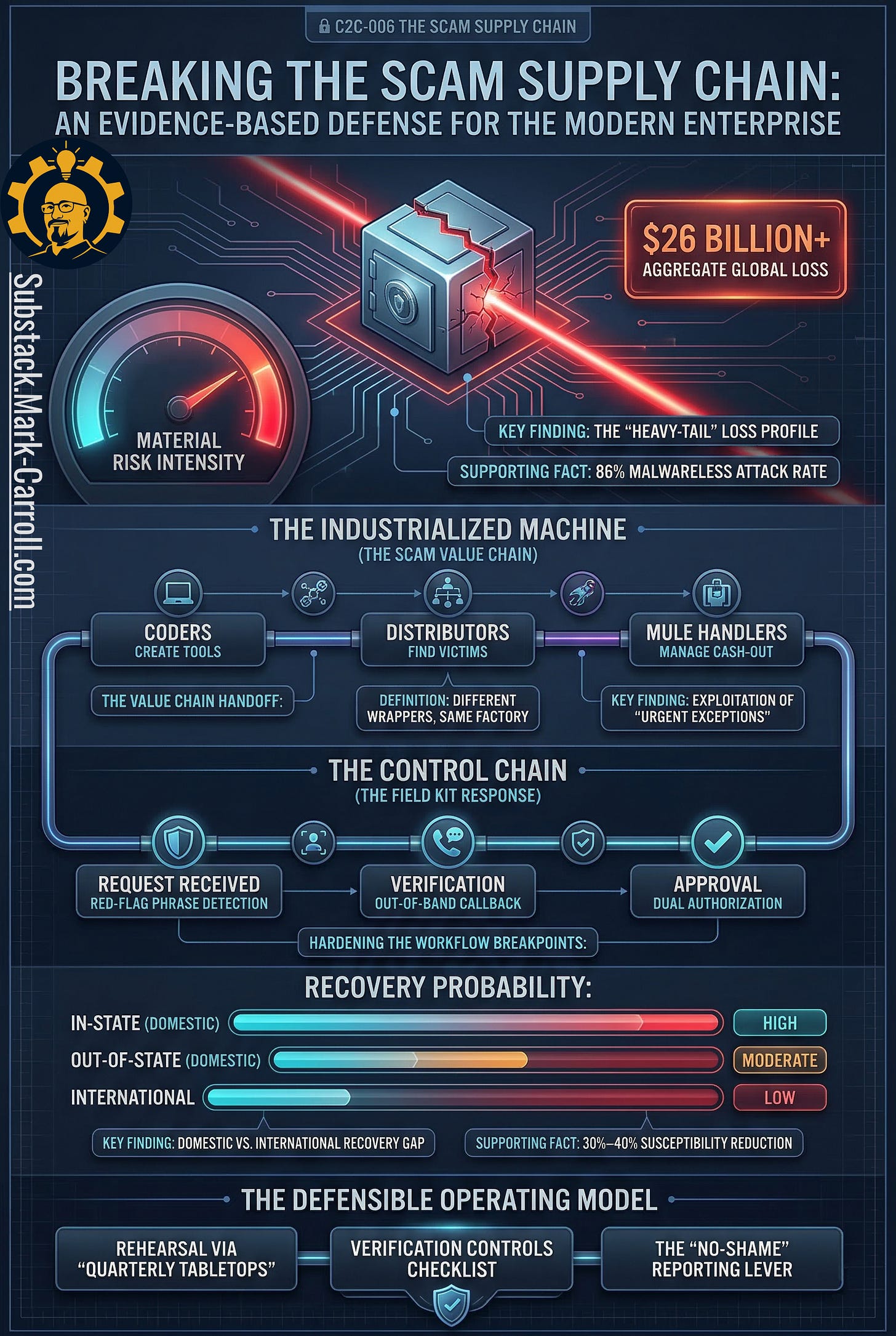

Scams have scaled into a supply chain. You are not facing a parade of lone operators improvising in dark basements. You are facing reusable scripts, rented credibility, specialized handoffs, and workflow pressure applied at the exact moments when someone inside your organization can still say yes alone. This episode is about how that machine works, why it spreads so efficiently, and what leaders can do to harden the few workflows where social engineering turns approvals into cash.

How It Works

The scam is not handcrafted. It is assembled.

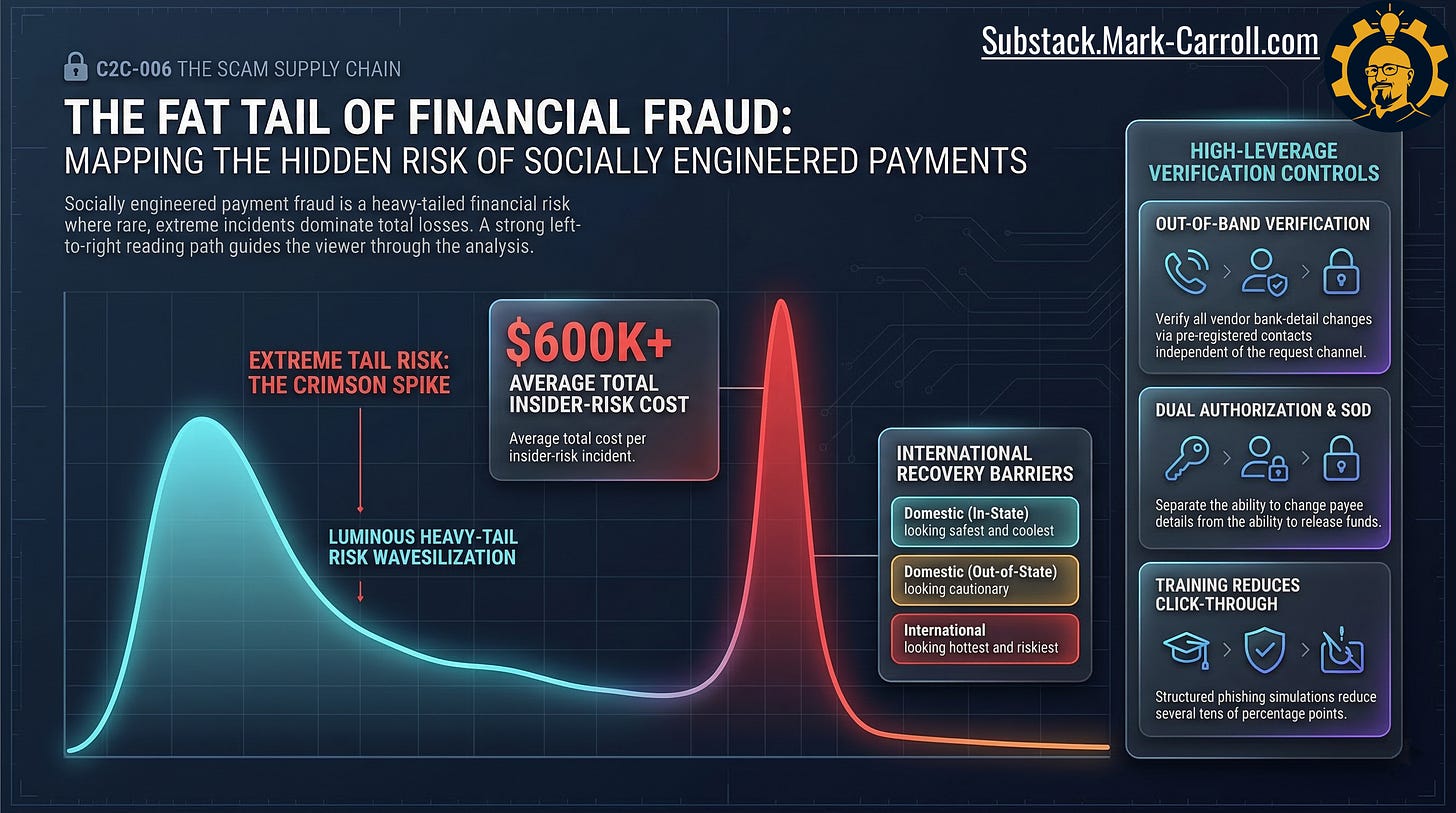

Many successful email-enabled attacks are malware-less. Multiple industry analyses of high-impact business email compromise (BEC) cases show that the vast majority involve no malicious payload whatsoever. [EVIDENCE] That matters because it shifts the problem away from the comfortable fantasy that better technical filtering will save you. The threat is often not code. The threat is a believable human sequence.

In practical terms, that sequence runs through overlapping, specialized roles. Coders create or adapt the tooling. Distributors find and reach the victims. Mule handlers move value through domestic and international accounts. Cash-out specialists convert diverted funds into something harder to trace. Law enforcement has documented BEC ecosystems involving lawyers, linguists, social engineers, and mule coordinators, which means the organization on the receiving end is often facing an ecosystem rather than a lone scammer. [EVIDENCE]

That abstraction becomes easier to picture once you see how much of the machinery is reusable. Phishing kits and spoofing tools are sold on dark-web marketplaces. The same compromised mailbox that powered yesterday’s vendor-update scam can feed tomorrow’s recovery scam. The same victim list can be recycled through new wrappers. The same legitimacy kit can be re-skinned for a different department. The wrapper changes. The factory does not.

The victim is not the end of the transaction. Scripts get reused. Stolen trust gets reused. Mule routes get reused. Cash-out patterns get reused. What looks improvised on the surface still runs on a very repeatable relay underneath.

How It Spreads

The machine scales because specialization helps the attacker more than the defender.

One layer gets replies. One layer handles legitimacy. One layer routes money. One layer comes back later pretending to help. The organization receiving the attack is split by function instead. Support sees a caller. Finance sees a payment request. Ops sees a workflow exception. Treasury sees routing risk. Each function sees a fragment. The attacker sees the whole relay.
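One way to picture the fragment problem is to join the fragments. The sketch below assumes each team already logs odd requests with a shared counterparty identifier, which many organizations do not. The `Event` type, the `linked_fragments` function, the field names, and the 48-hour window are all hypothetical choices for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    team: str      # "support", "finance", "ops", "treasury"
    entity: str    # shared counterparty id, e.g. a vendor or customer
    at: datetime   # when the team saw the request


def linked_fragments(events, window=timedelta(hours=48)):
    """Return {entity: [teams]} for entities that two or more teams
    touched, with all of that entity's events inside one window."""
    by_entity = defaultdict(list)
    for event in events:
        by_entity[event.entity].append(event)

    linked = {}
    for entity, items in by_entity.items():
        times = [e.at for e in items]
        teams = {e.team for e in items}
        # Simplification: compare first and last sighting only.
        if len(teams) >= 2 and max(times) - min(times) <= window:
            linked[entity] = sorted(teams)
    return linked


# Hypothetical log entries.
start = datetime(2024, 1, 8, 9, 0)
log = [
    Event("support", "vendor-123", start),
    Event("finance", "vendor-123", start + timedelta(hours=3)),
    Event("treasury", "vendor-123", start + timedelta(hours=20)),
    Event("support", "customer-9", start),
]
print(linked_fragments(log))
```

Nothing in the sketch is clever. It only does what no single function does by default: look at the same counterparty from every team's seat at once.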

Most organizations respond to this problem with awareness training. And here is where the first false floor appears. Training that teaches people to recognize a named scam type after the costume is already obvious does not reach the actual vulnerability. The attacker does not need to defeat your awareness program. The attacker needs to arrive before the recognition pattern fires. Interruption looks different from recognition. Interruption means teaching people how to stop the line when a request feels wrong, even before they can prove it is fraud.

That is why the urgent exception matters so much. It is not just tone. It is a workflow wedge: the specific point in an approval process where one person can still say yes alone, under pressure, without a second check in the room. A same-day bank-detail change, a support callback, a refund reversal, or an OTP request can all arrive sounding normal, useful, and oddly professional. The wrapper feels new. The weak point is old.
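The wedge can be stated as a rule small enough to read in one sitting. This is a sketch under assumptions, not a control framework: the action names are lifted from the examples above, and `ApprovalRequest`, `WEDGE_ACTIONS`, and `can_proceed` are hypothetical names. Note what the rule leaves out. Urgency is recorded, but it is never an input to the decision.

```python
from dataclasses import dataclass, field

# Hypothetical action names, based on the examples in the paragraph above.
WEDGE_ACTIONS = {
    "bank_detail_change",
    "support_callback",
    "refund_reversal",
    "otp_request",
}


@dataclass
class ApprovalRequest:
    action: str
    urgent: bool = False                          # recorded, never used to relax the rule
    approvers: set = field(default_factory=set)   # distinct people who said yes


def can_proceed(request: ApprovalRequest) -> bool:
    """Wedge actions need at least two distinct approvers.
    Everything else follows the normal path."""
    if request.action not in WEDGE_ACTIONS:
        return True
    return len(request.approvers) >= 2


# Hypothetical same-day request with a single approver.
request = ApprovalRequest("bank_detail_change", urgent=True, approvers={"ap_lead"})
print(can_proceed(request))   # one person cannot say yes alone

request.approvers.add("controller")
print(can_proceed(request))   # a second check is now in the room
```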

Consider the asymmetry from the attacker’s position. They have designed a coherent relay across every touch-point. Your organization has designed functional silos optimized for efficiency. Support is not talking to Finance about the callback they just handled. Finance is not flagging the payment request to Ops. Treasury is not looping back to Support about the routing anomaly. The attacker’s coherence advantage is not a product of their sophistication. It is a product of your org design.

That asymmetry is also why “unintentional insider threat” is an uncomfortable but necessary phrase. Research on financially motivated insider cases found that roughly half involved insiders who colluded with or were recruited by external groups. [EVIDENCE] But the attacker does not need a malicious or recruited insider every time. The attacker needs one reasonable person, acting in good faith, placed under the right pressure at the wrong moment inside a workflow that was never hardened for pressure in the first place.

What Makes This Dangerous

An organization that treats scams as isolated weird incidents becomes a training system for attackers.

Every delayed report teaches them how long shame buys silence. Every exception teaches them where policy bends under pressure. Every siloed handoff teaches them which team can be rushed. Every “we handled it” without pattern review teaches them they can come back through a different door.

The assembly line is dangerous because the loss curve is skewed. Analyses of BEC losses reported to U.S. law enforcement show a distribution in which a small number of multi-million-dollar incidents dominate total loss. [EVIDENCE] Most attempts do not become catastrophic. The few that do can swallow the year.
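A skewed loss curve is easy to check against your own incident log. The sketch below measures how much of total loss sits in the largest few incidents. The dollar figures are invented for illustration and are not drawn from the Research Binder or from any reported dataset. `top_share` is a hypothetical helper name.

```python
def top_share(losses, k):
    """Share of total loss contributed by the k largest incidents."""
    total = sum(losses)
    if total == 0:
        return 0.0
    largest = sorted(losses, reverse=True)[:k]
    return sum(largest) / total


# Invented numbers: 100 small incidents and 2 large ones.
small_incidents = [5_000] * 100
large_incidents = [4_000_000, 5_500_000]
losses = small_incidents + large_incidents

share = top_share(losses, k=2)
print(f"Top 2 of {len(losses)} incidents carry {share:.0%} of total loss")
```

If your own numbers look anything like this, average loss per incident tells you very little. The handful of incidents at the top of the sorted list is where the year is decided.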