The Sprint Stayed Green, The Budget Didn't

Profit With Proof | Episode 1

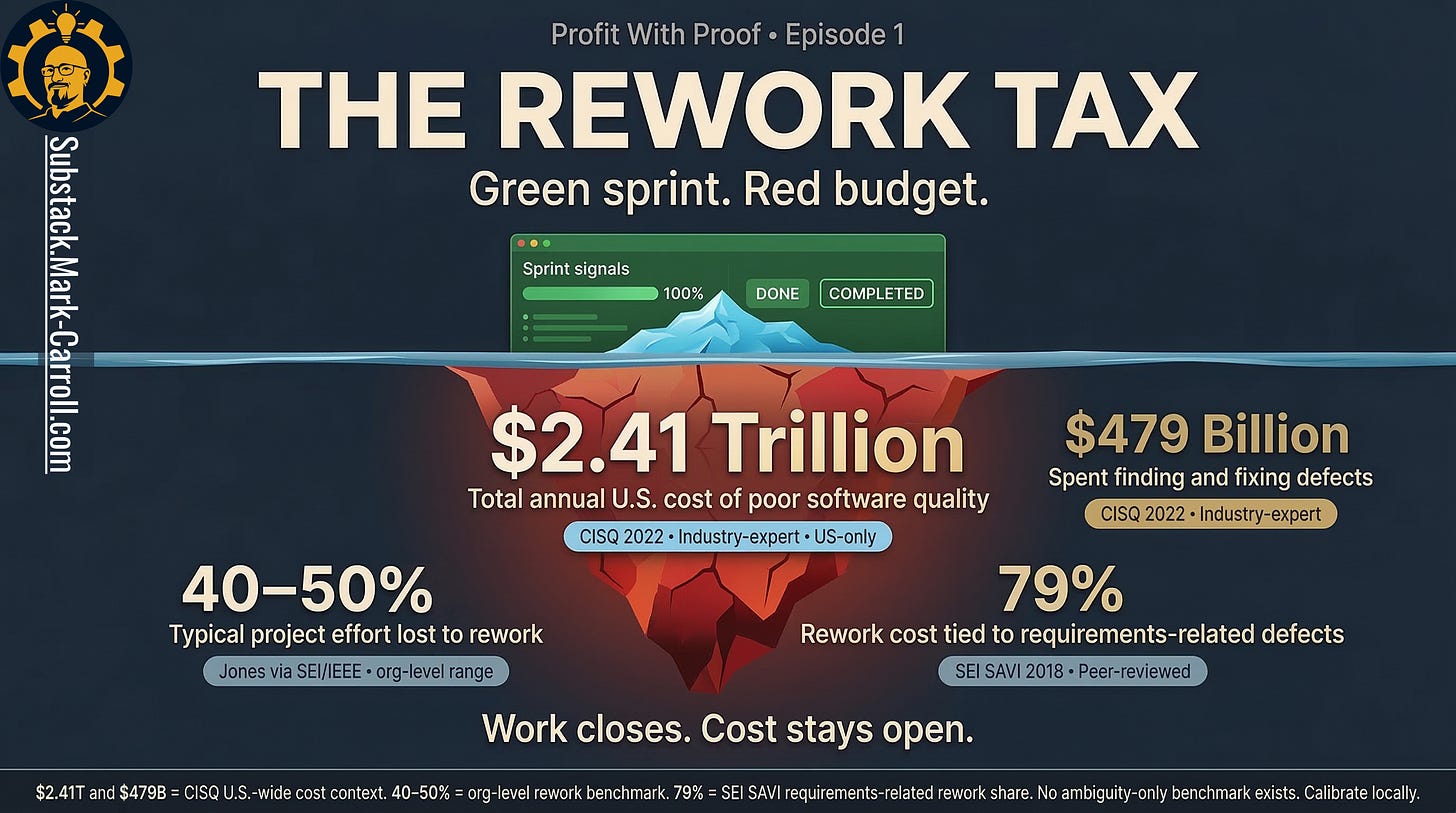

The Rework Tax

How ambiguity at the start of a sprint becomes a margin problem at the end of a quarter

The sprint closed. The feature shipped. The budget did not agree.

Research Binder: the receipts (citations + source notes) are compiled in a PDF at the bottom of this article.

Here is how to price what your metrics are hiding.

A product manager writes the story in forty-five minutes because the planning meeting is already running hot and nobody wants to be the person who slows the room down. The acceptance criteria look finished enough to survive the scroll test. Engineering reads them one way. QA reads them another. Nobody is confused enough to stop the sprint.

That is the dangerous part. Everybody sees just enough clarity to keep moving.

By Friday, the ticket is closed. By Monday, a follow-on ticket is open. Nobody logs it as rework. Nobody calls it duplicate labor. Nobody says out loud that the same feature is now billing the organization twice. The sprint velocity stays green. The budget does not.

Most teams treat this as a collaboration annoyance. A miscommunication. A little friction between functions. That reading is too small. What just happened was not a failure to align. It was hidden cost entering the system through a door your metrics do not watch.

That is the rework tax.

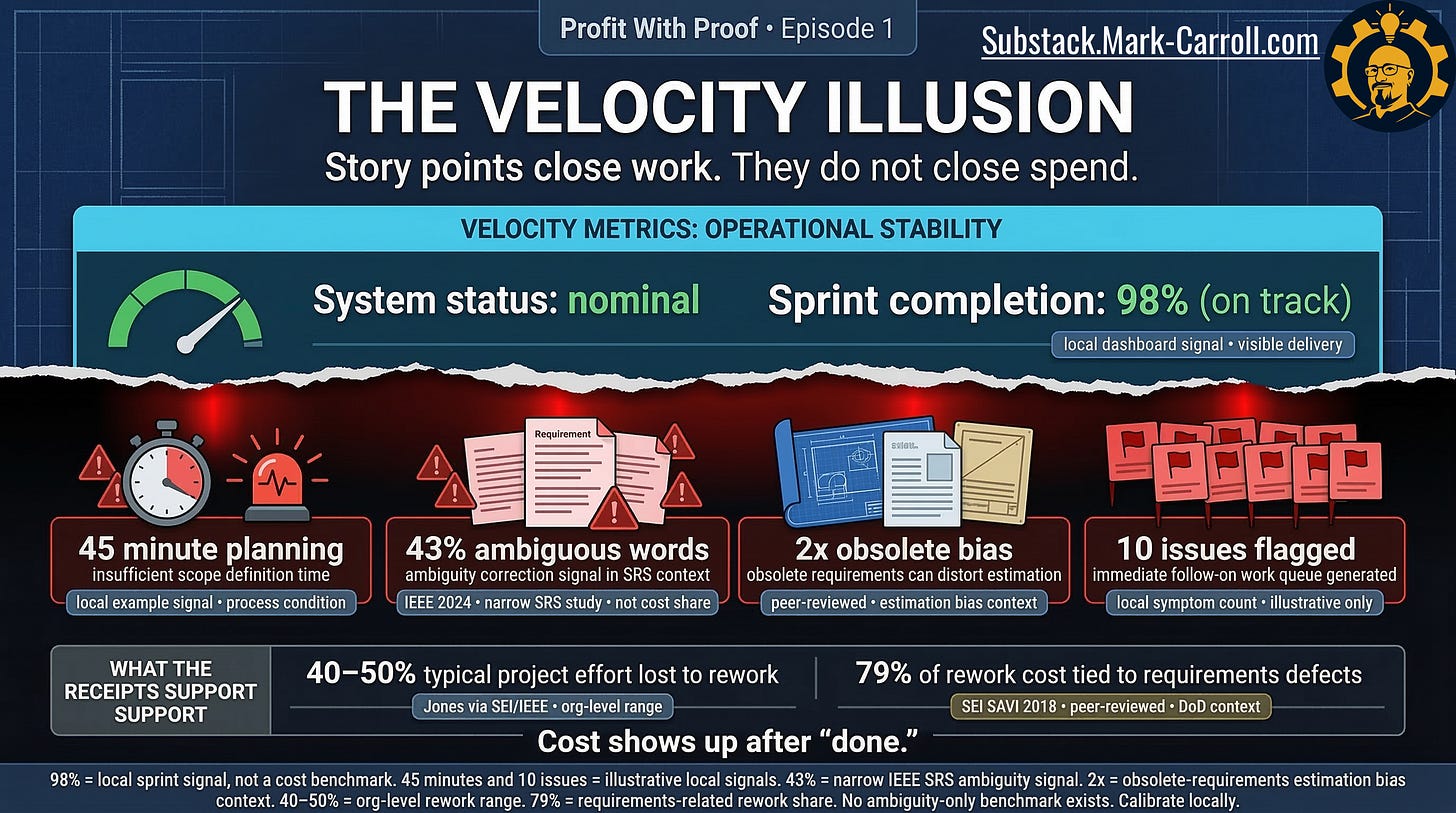

The measurement trap

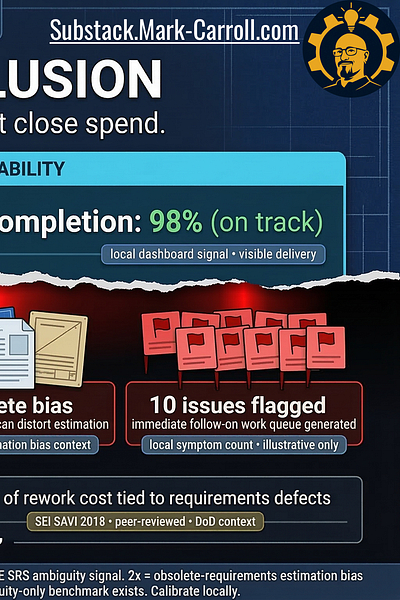

The first trap is not incompetence. It is measurement. Story points measure closure. Velocity measures throughput. Neither tells you whether the work was right the first time. That sounds obvious when you say it slowly. It becomes much less obvious once a dashboard goes green and the meeting ends.

The system has already decided what counts as progress, and what does not.

A green dashboard is operationally comforting because it gives everyone something clean to point at. Tickets moved. Commitments held. Sprint intact. The board can breathe. Leadership can move on. The team can say it shipped. Nobody has to ask the more expensive question, which is whether the shipped work matched the intended work closely enough to avoid paying for it again.

That omitted question is where the tax lives.

What the dashboard reports is closure. What the business still pays for is everything closure failed to prevent: hidden spend, follow-on tickets, reopened work, quiet capacity loss, engineers fixing the same shape twice under a new label. The dashboard is not lying. It is omitting the bill.

And that omission matters, because the reopen is not where the cost starts. The reopen is where the cost finally becomes visible.

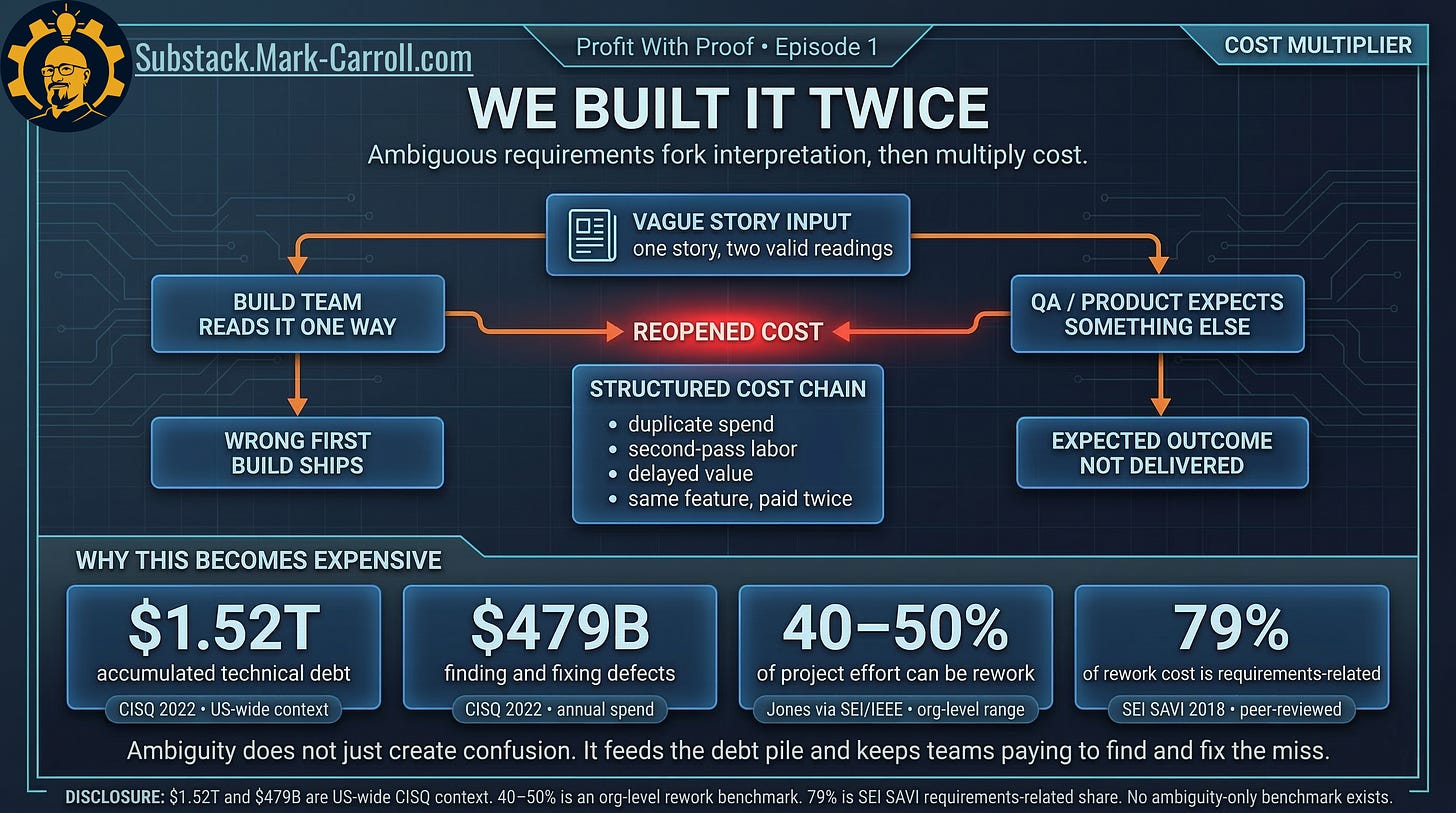

Same feature. Second bill.

One story with two valid readings creates two operational realities. One team builds what it believes was requested. Another verifies against what it expected to receive. Both teams can be competent. Both can act in good faith. That does not lower the invoice. It increases it.

The first build still consumed planning time, engineering time, review time, and QA time. Once the story reopens, the organization is not buying new value. It is paying again for value it thought it already purchased.

Same feature. Second bill.

That is why rework feels so corrosive. It is not only expensive. It is insulting. Teams are not just asked to do more work. They are asked to repeat work the system already counted as done. The emotional damage is real. But the financial damage is the part leaders keep missing. Second-pass labor is still labor. Delayed value is still delayed value. A closed story that comes back wearing a different name is still the same money leak.

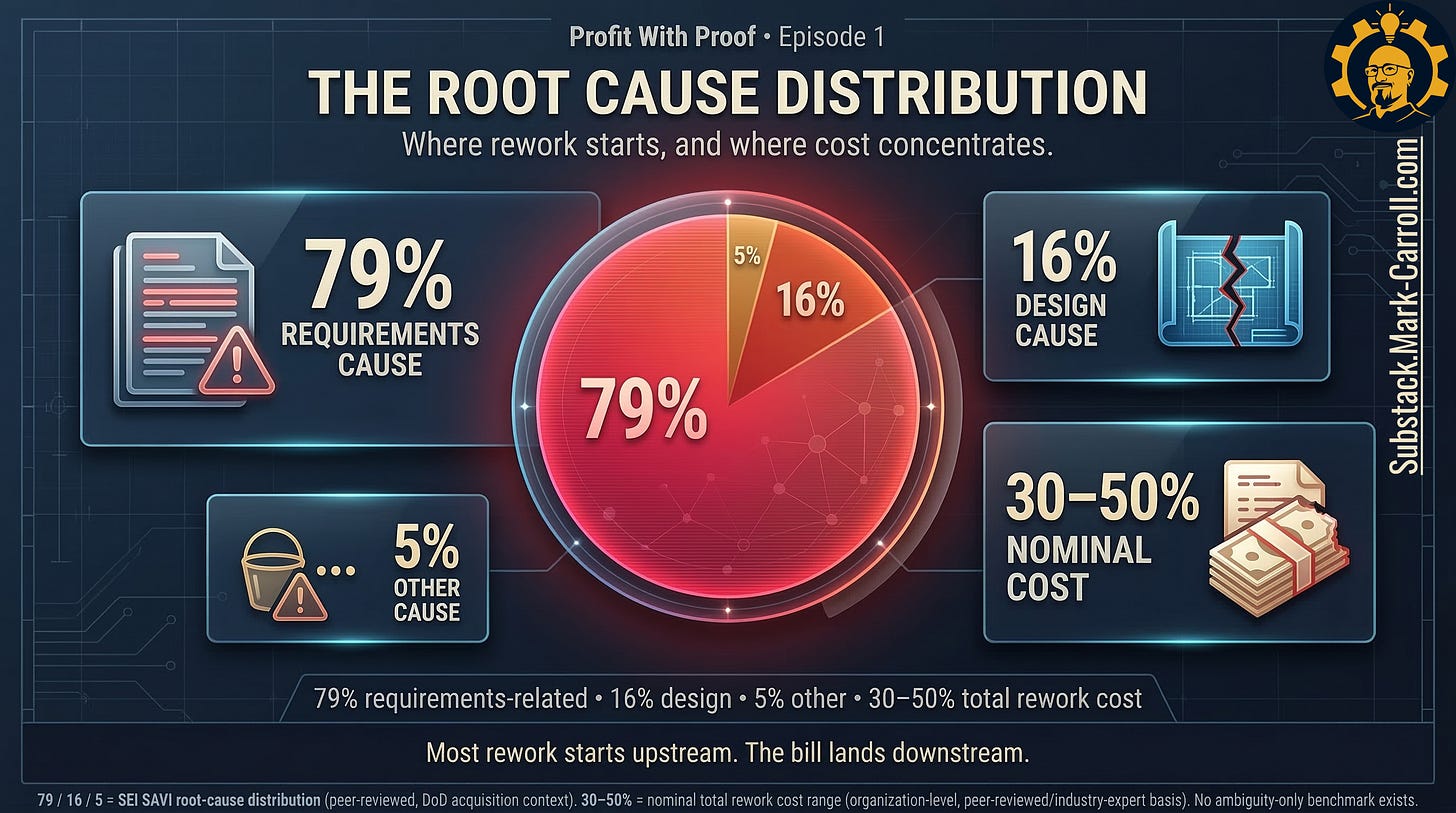

Where the pile-up actually comes from

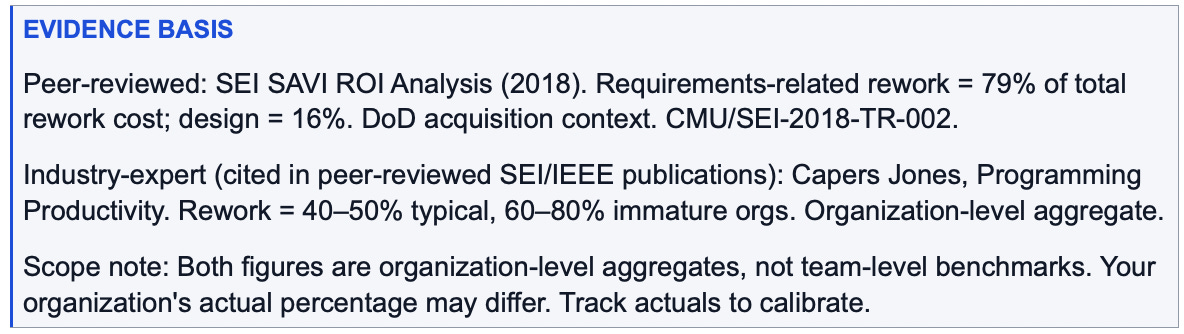

If rework were evenly distributed across all causes, the answer would be a broad call for better discipline everywhere. That is not what the evidence suggests. The pattern is more concentrated than that.

Research and industry benchmark data consistently show that requirements-related defects account for an outsized share of total rework cost. A peer-reviewed SEI technical report on DoD acquisition systems found that requirements-related rework made up 79% of total rework cost, with design defects at 16% and other sources making up the remainder. Separately, industry-expert benchmark data from Capers Jones, cited across multiple peer-reviewed SEI and IEEE publications, estimates that typical organizations spend 40 to 50% of total project effort reworking defects in requirements, design, and code. Immature organizations may reach 60 to 80%.

That is what changes the argument. Requirements discipline is not process theater. It is an economic choke point. Design still matters. Other sources still exist. But when one source bucket is this lopsided, the response cannot stay generic.

How delay multiplies the bill

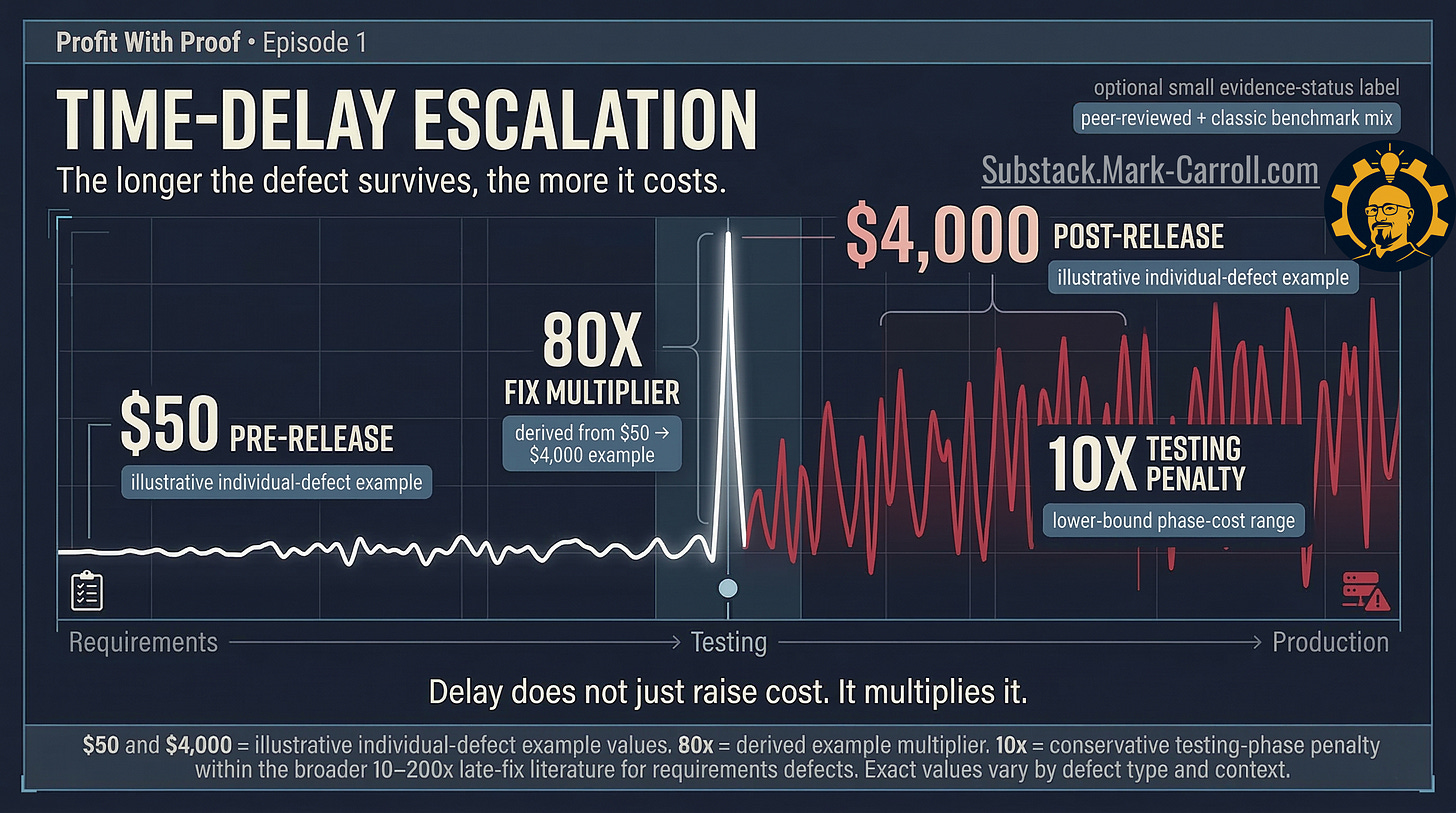

The problem gets worse because delay changes the economics. A miss caught early is still annoying. A miss caught late is punitive. The same misunderstanding moves through different cost states as it survives longer.

Classic peer-reviewed research, including Boehm (1988) and McConnell (2001), puts the cost of fixing a requirements defect in production at 10 to 200 times the cost of catching it in the requirements phase. The IBM Systems Sciences Institute placed it at 15 times. The SEI SAVI analysis independently confirmed a range of one to two orders of magnitude. The specific multiplier varies by organization and defect type, but the direction is consistent across decades of evidence: what felt like “we can fix it later” at planning time becomes a different class of expense by testing, and another one again by production.

The defect did not merely survive. It appreciated.

“Fix it later” sounds like sequencing. In practice, it is borrowing against future focus, future budget, and future credibility. By the time a requirements miss travels through testing, release, and production support, the cost is no longer just technical. It becomes coordination drag, support burden, schedule disruption, and management attention nobody budgeted to spend.

Delay does not just extend the problem. It multiplies the bill.

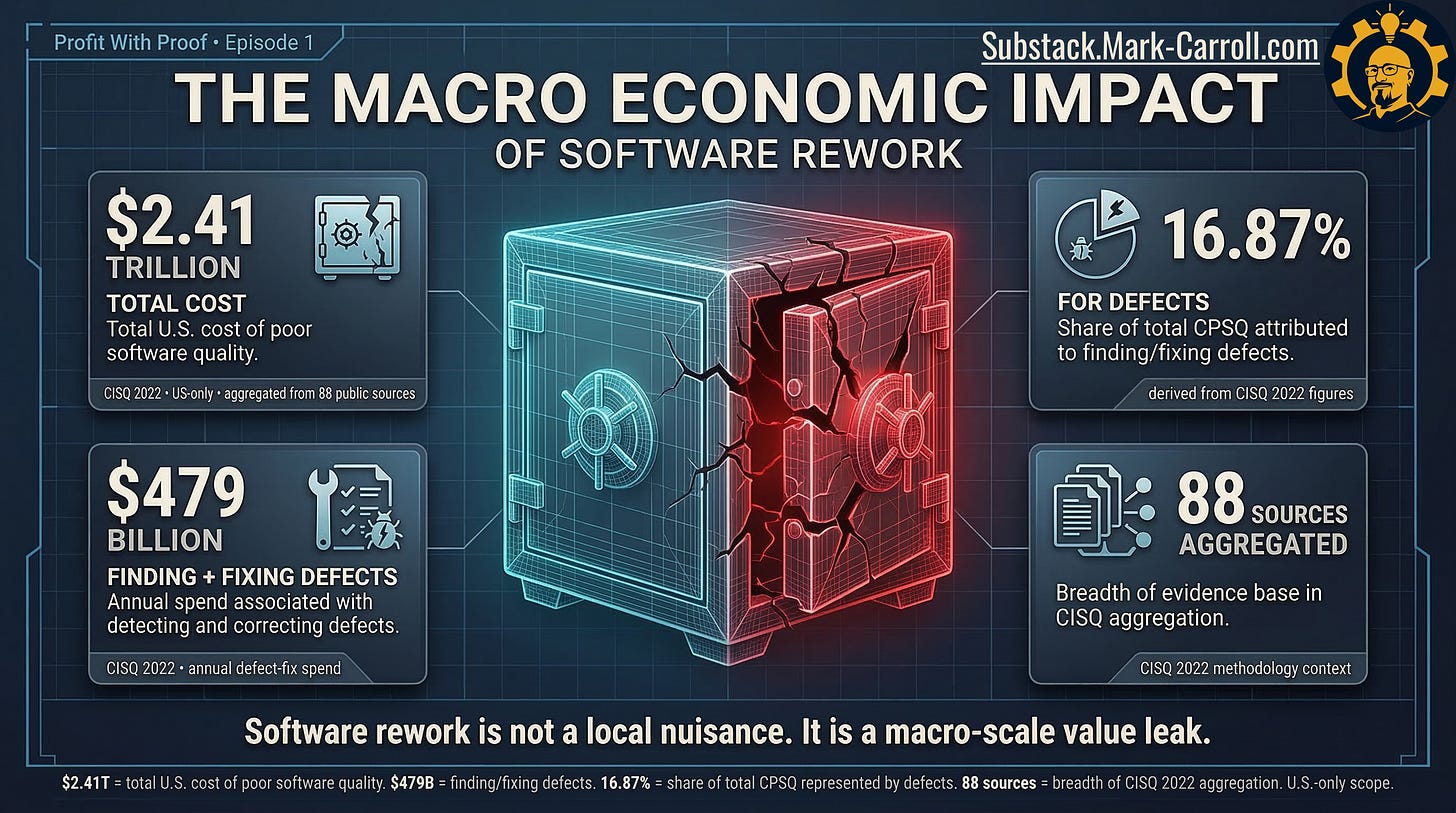

At small scale, a reopened story looks like a team problem. At actual scale, it belongs to a pattern that operates across the industry. Industry-expert benchmark data from the Consortium for Information and Software Quality (CISQ 2022 Cost of Poor Software Quality report, US-only, aggregated from 88 public sources) estimates the total US cost of poor software quality at $2.41 trillion, with finding and fixing defects accounting for $479 billion of that figure. Your Monday morning reopen is one data point in that pattern. One hidden ticket does not look like a national problem. Thousands of those tickets, spread across organizations that measure delivery better than correctness, absolutely do.

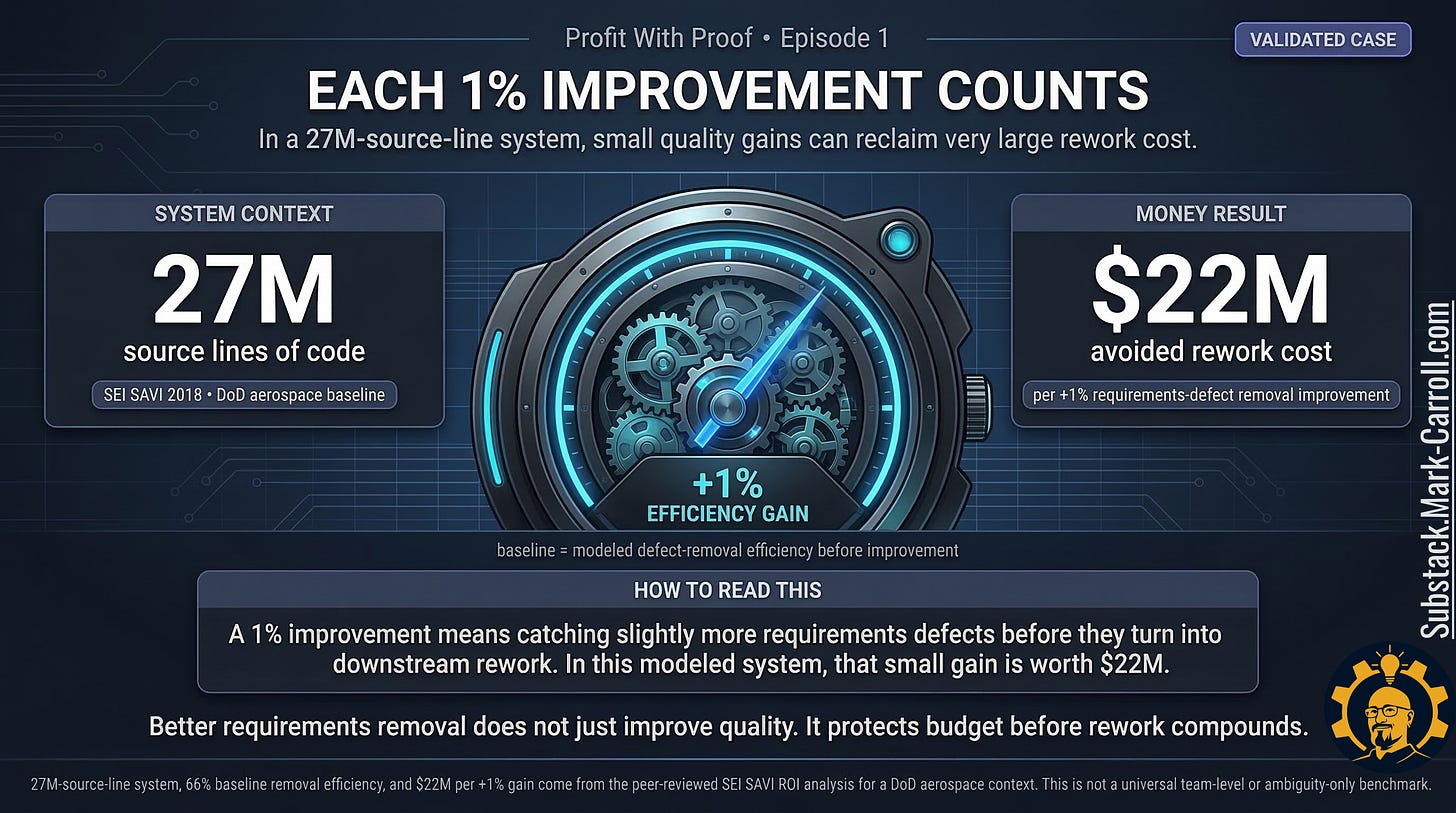

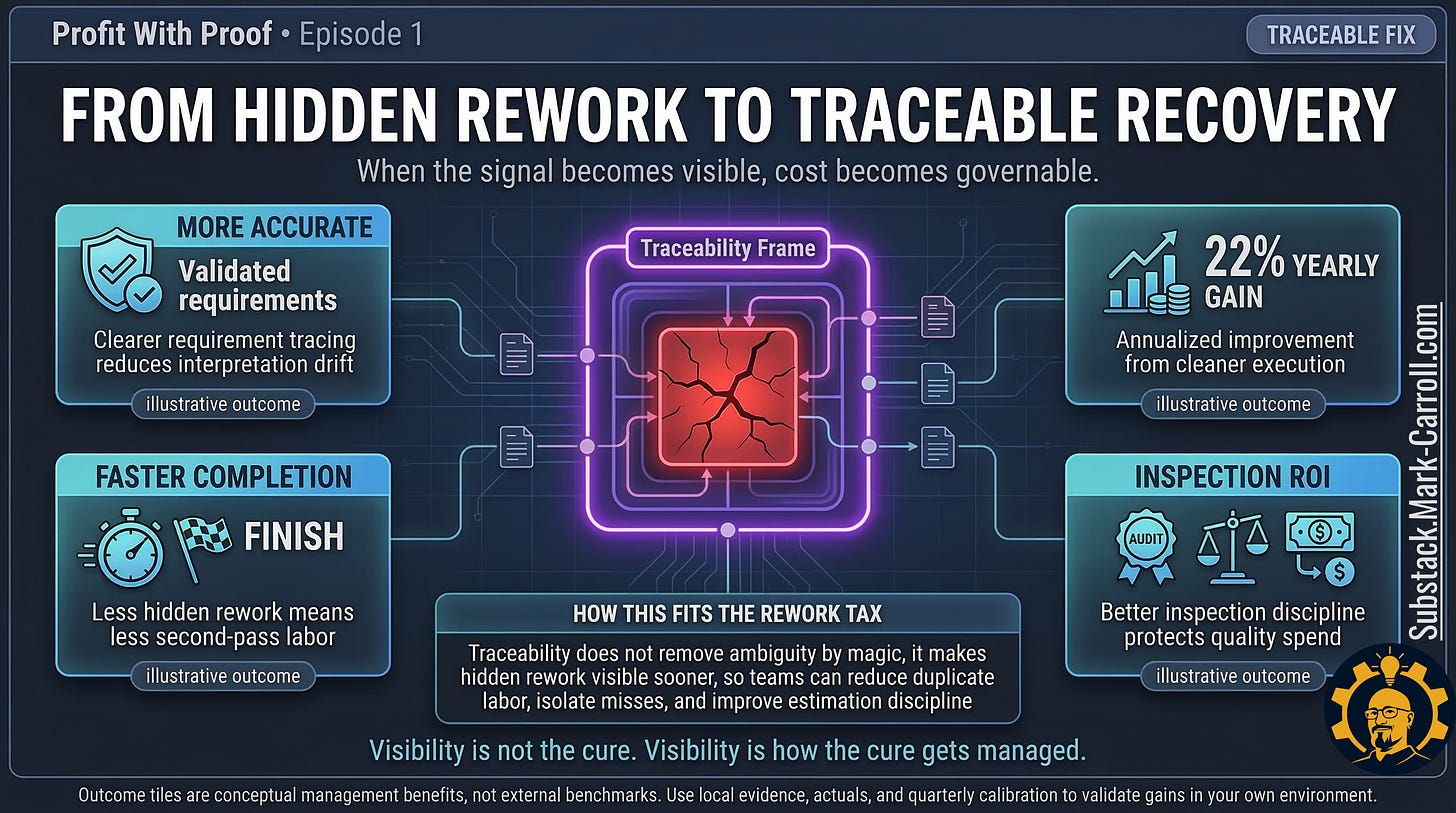

Why small upstream gains are worth more than they look

The good news is not that the problem is small. It is not. The good news is that small upstream gains can be worth more than they look. A peer-reviewed SEI study of DoD aerospace systems (CMU/SEI-2018-TR-002) found that each 1% increase in requirements defect removal efficiency saved $22 million for a 27-million-source-line-of-code system, with savings scaling linearly with system size and total rework percentage. That is a specific finding from a specific context, and you should calibrate to your own system size and baseline. But the directional principle it encodes is robust across decades of evidence: catching requirements problems before they travel downstream recovers real money, not promised money.

Not magical money. Not guaranteed ROI. Real avoided rework that never had to be funded in the first place. The appropriate posture for any claim in this territory is transparency and defensibility, not guaranteed outcomes. That is exactly the posture this article takes.

The right question is not “Can we prove the universal exact cost of ambiguity?” That is the wrong standard. No benchmark exists for ambiguity specifically. The better question is: “Can we bound our local exposure well enough to change the next decision?”

That is where this article turns from diagnosis to capability.

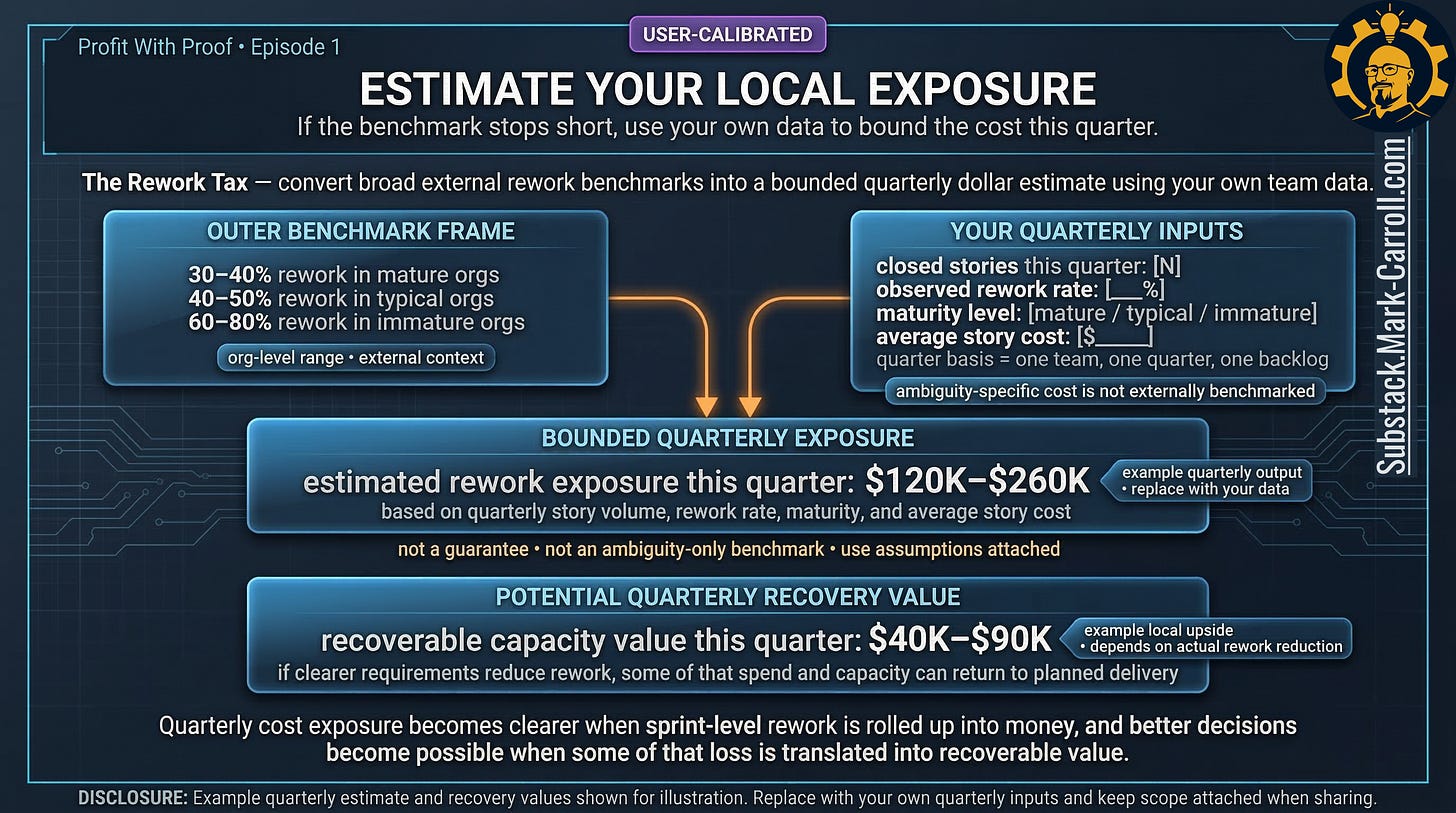

How to bound your own rework tax

You do not need an ambiguity-only benchmark to make a better call this quarter. You need a structured way to price the part of the problem you can already see. Here is a one-quarter playbook you can run with a spreadsheet.

Start with one quarter of closed stories. Pull the full list. Estimate or observe how many reopened, spun off follow-on work, or had to be rebuilt because the original interpretation failed to match the intended outcome. For each of those, rough-classify the root cause: requirements or acceptance criteria ambiguity, design or implementation error, external dependency, or other.

Estimate an average story cost using your own numbers: typical engineer, PM, and QA daily cost multiplied by average days per story. Approximate is fine. You are not building a courtroom exhibit. You are building a decision-grade range you can bring into a budget conversation without apologizing for it.

Then multiply: the number of “built it twice” stories, times your average story cost, times the share you estimate belongs in the requirements or acceptance criteria bucket. This gives you a local, bounded estimate of the rework tax for one quarter. It will not be precise. It will be better than zero.
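If a spreadsheet is not handy, the same multiplication can be sketched in a few lines of Python. Every number below is a hypothetical placeholder, not a benchmark, and the function and parameter names are illustrative. The sketch also assumes, for simplicity, that the engineer, PM, and QA each spend the full average number of days on a story. Running it with a low and a high requirements share gives a range rather than a single figure.

```python
def average_story_cost(daily_costs, avg_days_per_story):
    """Combined daily cost of everyone on a story, times average days per story.

    Simplifying assumption: each role is engaged for the full duration.
    """
    return sum(daily_costs.values()) * avg_days_per_story


def rework_tax(built_twice_count, avg_story_cost, requirements_share):
    """Built-it-twice stories x average story cost x requirements/AC share."""
    if not 0.0 <= requirements_share <= 1.0:
        raise ValueError("requirements_share must be between 0 and 1")
    return built_twice_count * avg_story_cost * requirements_share


# Hypothetical inputs: replace with your own quarter's numbers.
daily_costs = {"engineer": 800, "pm": 700, "qa": 500}  # per-day cost by role
story_cost = average_story_cost(daily_costs, avg_days_per_story=3)

built_twice = 12  # stories reopened, spun off, or rebuilt this quarter
low = rework_tax(built_twice, story_cost, requirements_share=0.4)
high = rework_tax(built_twice, story_cost, requirements_share=0.7)

print(f"Estimated quarterly rework tax: ${low:,.0f} to ${high:,.0f}")
```

The low and high shares are the part worth arguing about in the room. The arithmetic is deliberately simple so the conversation stays on the inputs.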

Then do the part most teams skip. Track actuals against the estimate next quarter. Add a lightweight “rework?” tag to your tickets. Add a short root-cause field to closed rework items. Check periodically: what percentage of sprint effort went to rework, how many of those issues were catchable in requirements, and how that compares to the range you estimated. If the estimate was wrong, good. Now you are learning in public instead of guessing in private. If the number feels uncomfortably large, even better. That means the hidden cost has finally become discussable.
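The periodic check can also be sketched in Python, assuming you can export tickets with an effort figure, a rework flag, and a root-cause field. The `Ticket` structure, its field names, and the sample data are hypothetical. Map them to whatever your tracker actually exports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ticket:
    key: str
    effort_days: float
    rework: bool = False               # the lightweight "rework?" tag
    root_cause: Optional[str] = None   # e.g. "requirements", "design", "dependency", "other"


def rework_summary(tickets):
    """Share of effort spent on rework, and how much of it traces to requirements."""
    total_effort = sum(t.effort_days for t in tickets)
    rework_items = [t for t in tickets if t.rework]
    rework_effort = sum(t.effort_days for t in rework_items)
    req_items = [t for t in rework_items if t.root_cause == "requirements"]
    req_effort = sum(t.effort_days for t in req_items)
    return {
        "rework_share_of_effort": rework_effort / total_effort if total_effort else 0.0,
        "requirements_rework_count": len(req_items),
        "requirements_share_of_rework": req_effort / rework_effort if rework_effort else 0.0,
    }


# Hypothetical sprint export.
tickets = [
    Ticket("A-1", 3.0),
    Ticket("A-2", 2.0, rework=True, root_cause="requirements"),
    Ticket("A-3", 4.0),
    Ticket("A-4", 1.0, rework=True, root_cause="design"),
]

summary = rework_summary(tickets)
print(summary)
```

Comparing `rework_share_of_effort` against last quarter's estimated range is the step that turns a one-time guess into a tracked number.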

Turning visibility into usable capacity

The point is not only to name what rework is costing you. The point is to show what clearer requirements could recover in usable delivery capacity. If your bounded estimate says one quarter of hidden rework is eating somewhere between $X and $Y, that range changes the room. It gives engineering a financial language. It gives product a defensible tradeoff frame. It gives finance a route back to the sprint where the signal first appeared.

If you cannot prove the exact tax, bound it well enough that nobody in the room can keep pretending it is zero.

The whole problem started with a story that looked finished because the system was built to reward the feeling of done. That is why the fix is not motivational. It is structural. Better requirements quality. Better visibility. Better local pricing of rework before it compounds into something the quarter has to absorb.

This is the first argument in Profit With Proof. The next one goes deeper into what happens when teams delay naming the miss at all. The Escalation Delay Cost is about the price of deferring bad news, and why the bill gets uglier the longer a problem survives in silence.

Work closes. Cost stays open. Until someone prices it.

That is also the larger case behind my upcoming book, Collaborate Better. Better collaboration is not about being nicer in meetings. It is about reducing avoidable friction before it turns into waste, delay, and preventable cost. You can learn more at CollaborateBetter.us.

P.S. If you do not know what percentage of your last thirty days of sprint effort went to rework, that is not a data inconvenience. That is the finding. What will you do with it?

Empathy Engine | Substack.Mark-Carroll.com | Evidence-Forward Product Leadership

The most dangerous cost in software is rarely the one finance can see right away.

It is the one hidden inside “done” work. The reopened ticket. The follow-on fix. The second pass nobody logs as rework because the dashboard already moved on.

This piece is my attempt to name that leak in plain financial terms.

If one part hit home, tell me which one:

the green dashboard problem,

the duplicate labor bill,

the delay multiplier,

or the local exposure estimate.

And if you have ever watched a team celebrate Friday progress and quietly pay for it again on Monday, I want to hear that too.