The Solopreneur Approval Lie

🔒 Leader's Dispatch: Volume 39 (Hybrid Solopreneur, Part 3 of a 6-Part Series)

Episode 03: The Gap Between Completed and Done

Why your workflow says finished while your output is still failing in the real world

👋 Welcome to my paid subscriber-only edition of Empathy Engine (Leader’s Dispatch). Each week I build evidence-informed tools for serious solo operators, leaders, and team leads who have moved past the hype and are now wrestling with the real operating cost of hybrid AI stacks.

In Episode 1, I separated the fantasy of cheap AI leverage from the hidden orchestration tax that eats your week. In Episode 2, I showed how the stack that promised freedom often reassigns management directly back onto the founder. Episode 3 is where that hidden management burden becomes visible in the output itself. The workflow says the task is complete. Reality says it is not done. And by now, the client can see the gap.

Research Binder: the receipts (citations + source notes) are compiled in a PDF at the bottom of this article.

The Moment Everything Looks Fine

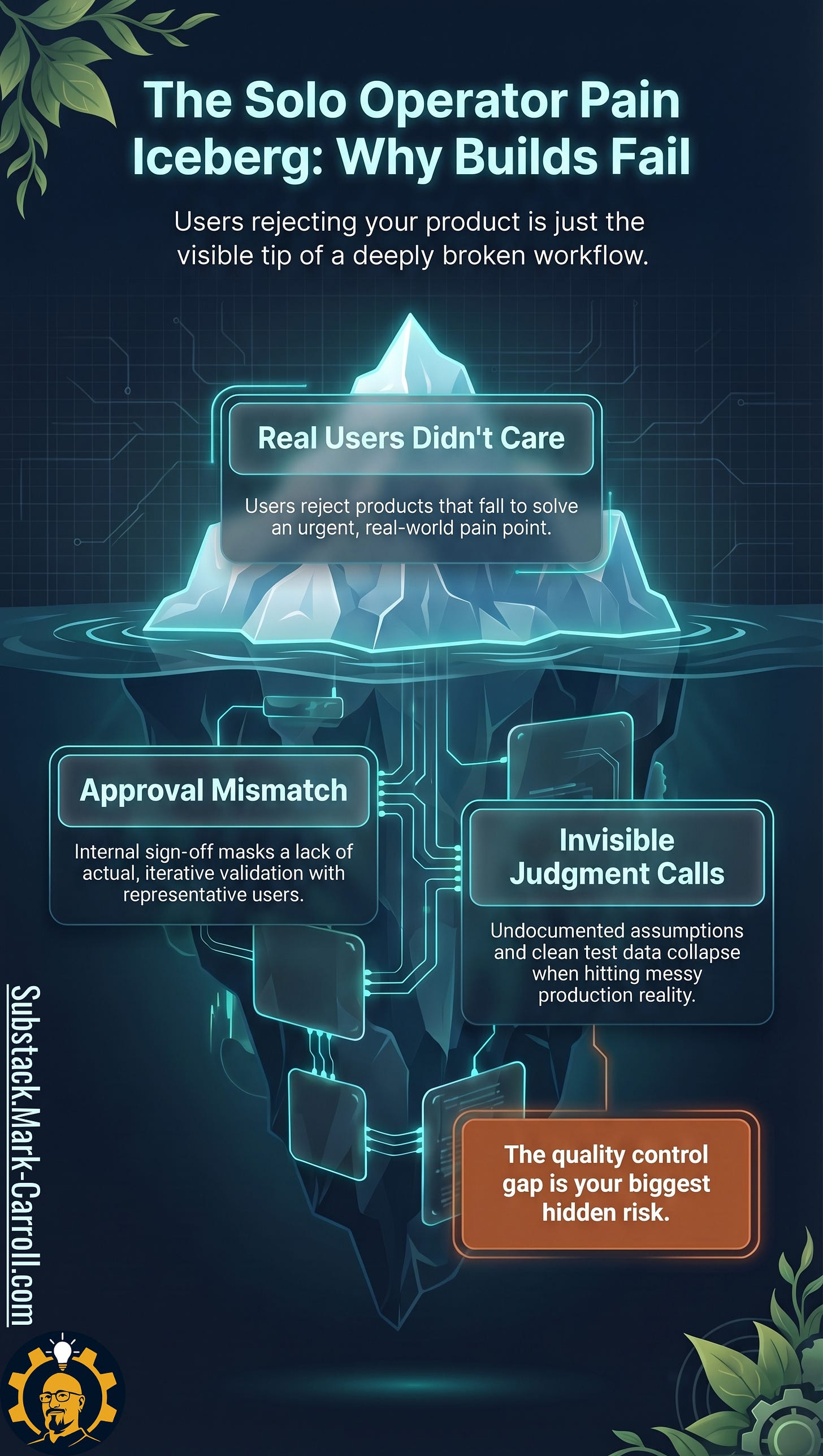

At first, a representative told us the product passed muster. As it turned out, that person was not qualified to make the assessment. The real user community needed less than a week to show us we had solved the wrong problem beautifully.

You already know this feeling. You run the workflow on clean inputs. The output looks polished. The formatting is tight. The system returns a satisfying signal. Nothing throws an error. The task moves to done.

Then the client replies.

Not to approve it. To ask why a competitor you both know well is missing from the brief entirely. Or to correct a fact the agent stated with complete confidence and got completely wrong. Or to say, in two careful sentences, that the deliverable addresses a problem they stopped having six months ago.

You spend the next two hours on cleanup that should never have been necessary. The task was marked complete. The client found the gap in under three minutes.

Operators who have lived this describe the same structural pattern. In production, the agent does not crash. It degrades silently. Everything looks fine until you check the output against what the user actually needed. The workflow said done. Reality said otherwise.

This is not a rare failure. It is the pattern hybrid workflows produce at scale, quietly, without error messages, until someone outside the system touches the output.

The failure that the client sees is only the surface. Below it, the same two structural causes appear in every case.

Approval mismatch and invisible judgment calls are the mass below the waterline. They are what make the gap structural rather than accidental. The sections that follow map each one.

The Gap Between Completed and Done

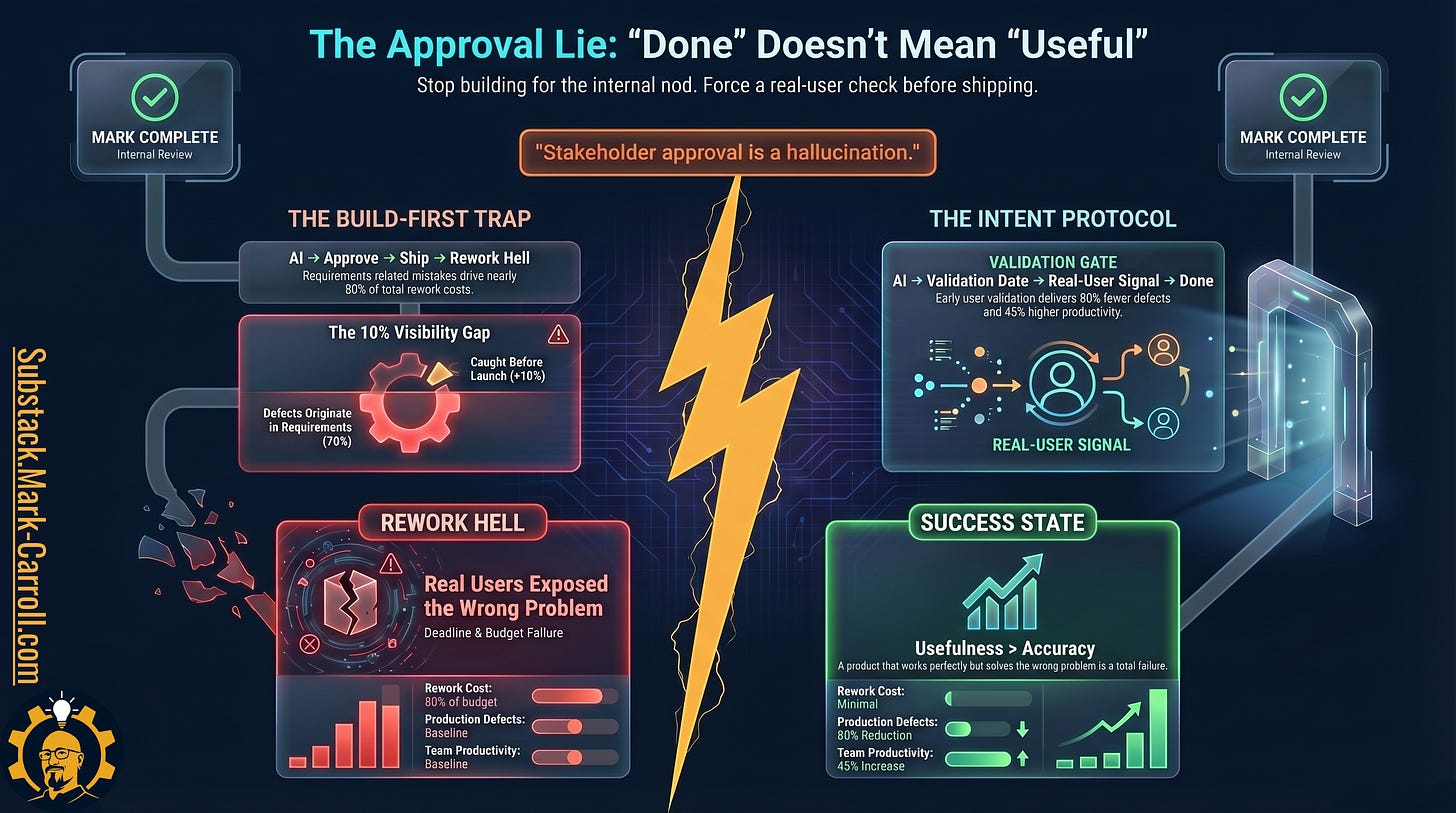

“Completed” is a system signal. “Done” is a reality signal. Hybrid workflows fail when they mistake the first for proof of the second.

That distinction sounds technical until you have paid for it. A task can be generated correctly, routed correctly, reviewed internally, and approved without friction, yet still fail the moment a real human depends on it. The client can see the gap. The customer can feel the mismatch. The downstream user can discover, within minutes, that the thing they received solves a cleaner, simpler, more fictional version of the problem than the one they actually live with.

When that happens, the task was completed according to the workflow.

It was never done in any operational sense that matters.

“Completed” is one of the most dangerous words in a hybrid business. It sounds objective, responsible, and final. In practice it often means something much narrower: the workflow reached its stopping point. The visible steps were satisfied. The machine stopped talking. None of that proves the output can survive contact with reality. Requirements research consistently shows that internal completion signals diverge from external usefulness signals most sharply at exactly this point, where approval becomes an assumption rather than a verification.

Completion Theater

Completion theater looks like productivity because it borrows the surface cues of disciplined work. Tasks close. Dashboards stay green. Milestones appear to move. Internal status language remains reassuring. The work seems to flow.

Then the rework bill arrives, later than it should, when the cost is higher and the explanation is uglier. That delay is exactly what makes completion theater so dangerous.

If the work broke loudly and immediately, the system could correct itself faster. Instead, hybrid workflows often produce a quieter failure mode. The output leaves the system looking composed. The client sees it before the operator sees the flaw. The user works around it before the founder learns it solved the wrong problem. The correction arrives downstream, after the invoice has been sent and the relationship has absorbed the gap.

Every time completion theater wins, you are pre-authorizing rework you will not log honestly later. The workflow records productivity. The calendar records cleanup. The founder feels busy, the client feels uncertainty, and the real cost gets distributed across small fixes that are only small when measured one at a time.

That cost accumulates in a sequence most operators recognize only after they have run it once.

The 79% figure is not a warning about bad operators. It is a finding about where validation gets removed from the system. That removal is designed in, not accidental.

Why Hybrid Workflows Create This Gap

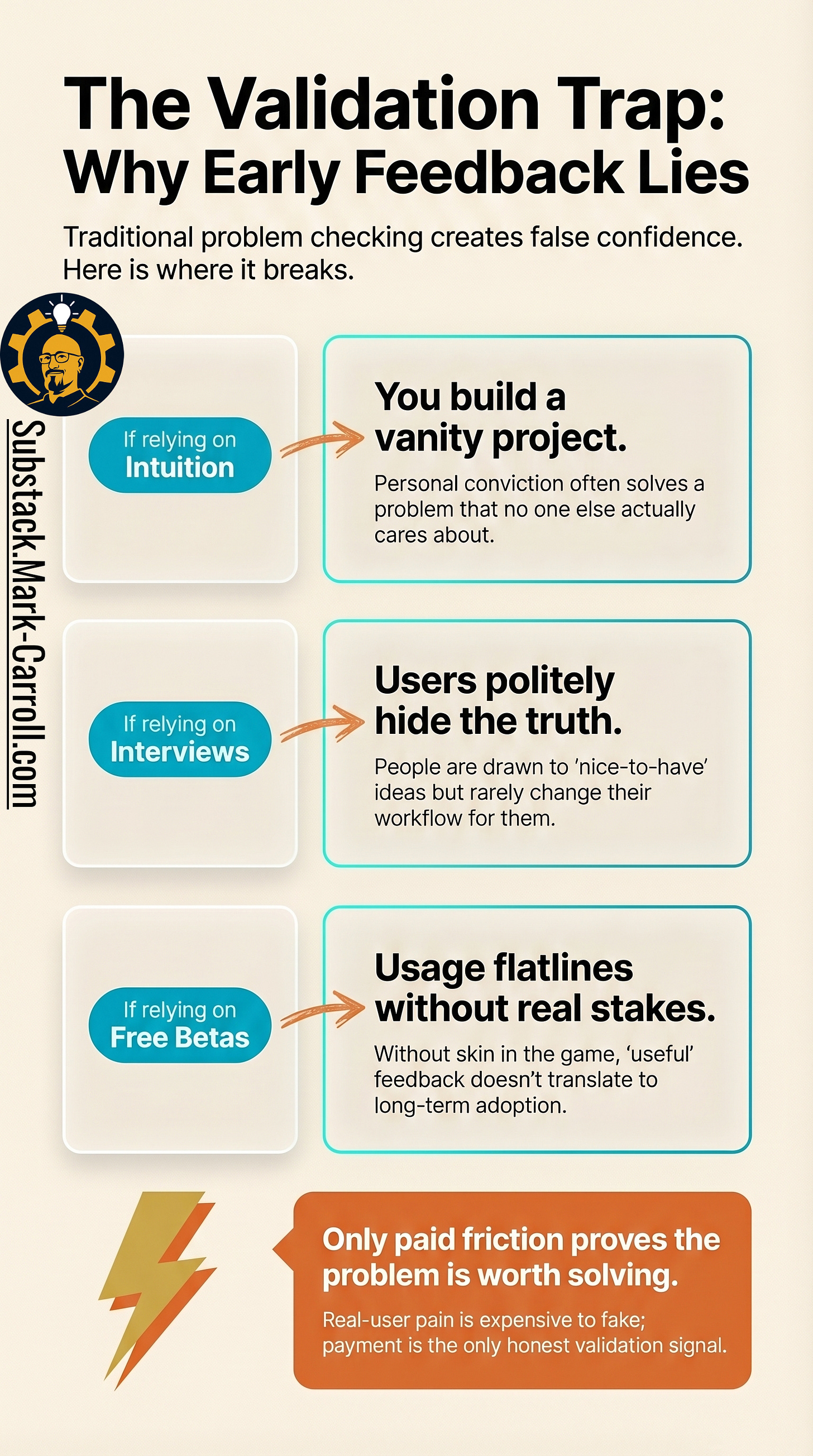

This is not a carelessness problem. It is a design problem. Better prompting helps at the surface level. So do better communication and better specifications. Those things reduce how often the gap appears. They do not solve the deeper problem underneath it.

The deeper problem is that hybrid workflows are optimized to confirm internal completion conditions rather than external usefulness conditions. They are built to recognize when the visible sequence has run, not when the work has actually earned trust. Most operators begin by thinking they need clearer instructions. Then better prompts. Then more review. What they usually need is a different standard for what counts as finished in the first place.

Research across complex project environments consistently finds that defects caught after internal approval cost substantially more to fix than those identified upstream. The cost is not only time. It accumulates in trust erosion, silent workarounds, and the downstream labor that never gets logged honestly. Industry-wide analyses put the majority of rework cost at the requirements and validation layer, not the implementation layer. The solo version of that pattern is paid in weekends and client trust instead of budget lines.

The gap does not begin when the output is generated.

It begins upstream, in the validation methods used before any work is produced.