The Catastrophic Cost of Looking Safe

Profit With Proof | Episode 4

The Compliance Theater Budget

👋 Welcome to this week’s edition of Empathy Engine. Every Tuesday, I publish a new article for paid subscribers first, then unlock the full piece for everyone late Thursday morning. Each week, I turn product leadership friction into practical tools, sharper language, and more defensible decisions.

How to spot the controls you are paying for but never really test.

Research Binder: the receipts, citations, and source notes are compiled in a PDF at the bottom of this article.

What this article does and does not claim

Does: argue that there is a costly, measurable gap between control presence and tested effectiveness, and give you a local calculation structure you can carry into a budget conversation.

Does not: argue that audits are useless, that compliance teams are faking everything, or that one worksheet will make a vulnerable organization secure. It does not promise a precise, guaranteed return on investment or an auto-computed incident reduction percentage.

This is a budgeting and governance argument about what your security money is actually buying, and how to spot the difference between an investment in resilience and a tax paid to optics.

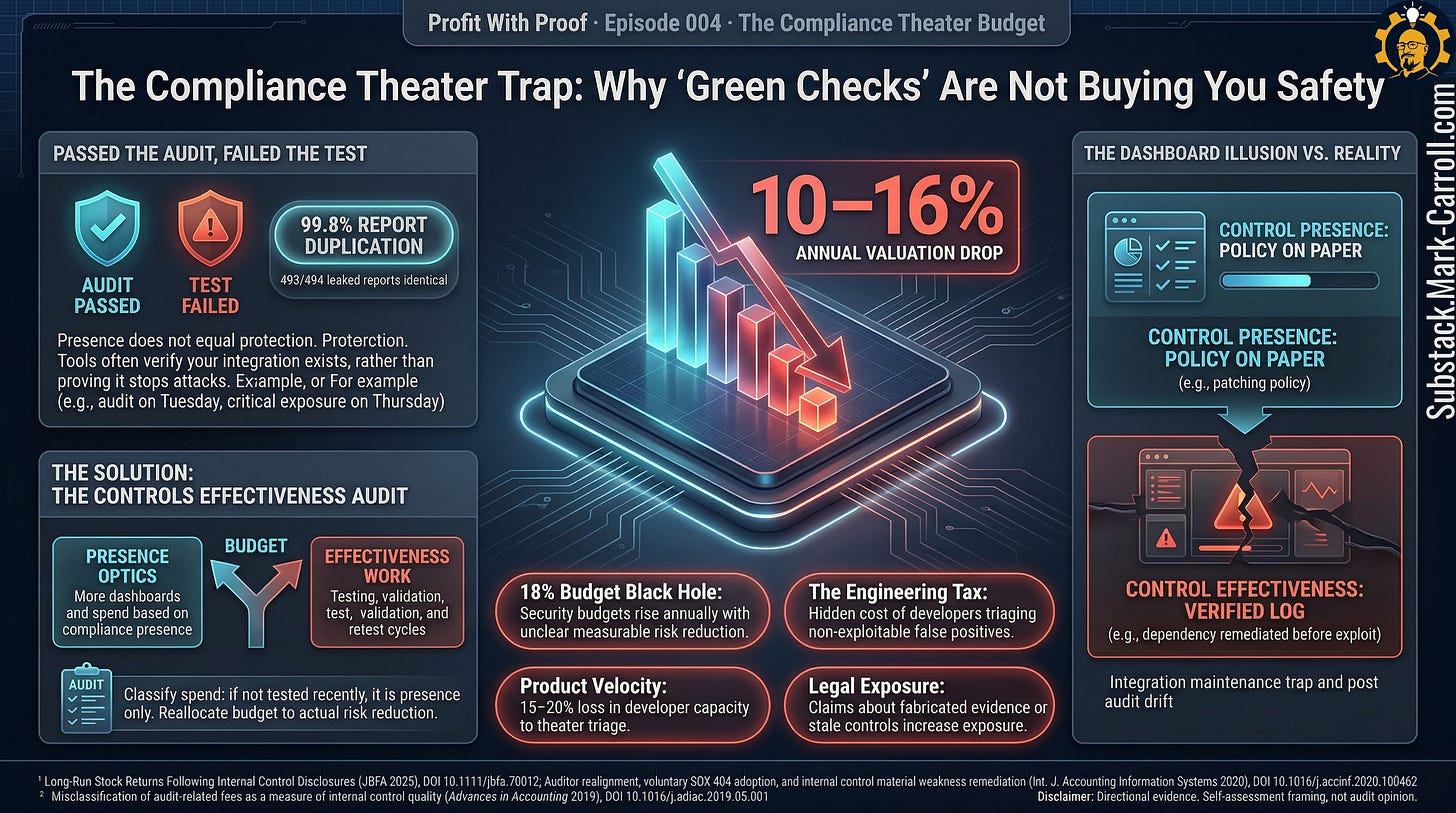

We passed the audit on Tuesday

The report was clean. The dashboards were green. The executive summary was written in the reassuring, sterile language of a successful compliance engagement. The organization had done the work, paid the fees, gathered the screenshots, and survived the scrutiny. Handshakes were exchanged. The enterprise deal that hinged on that SOC 2 certification was finally unblocked.

By Thursday, the pentest had found a critical vulnerability in production.

It was not buried in a forgotten sandbox. It was not sitting in a dusty internal tool nobody wanted to claim. It was in production, on a system people used every single day. It was a live, exploitable exposure sitting directly on the revenue path. The kind of unpatched dependency that can create a fast-moving production exposure before the organization realizes the control has drifted.

That is a brutal way to learn what your compliance budget was really buying.

Some version of this story plays out over and over: clean attestation, then an exposure that makes everyone realize they have been funding reassurance more than resilience.

The audit said the controls were there. The compliance dashboard confirmed the integrations were active. But the pentest asked a different question. It did not ask for a screenshot of a policy. It asked: Did those controls actually hold?

That gap between Tuesday and Thursday is the whole story.

The question was not whether documentation existed. It did. It was not whether someone worked hard to get the organization into review shape. They did. The problem was quieter, more systemic, and more expensive than a simple oversight.

The organization had funded proof of control presence far more aggressively than it had funded proof of control effectiveness. They had purchased the optics of security without financing the validation of security. That is exactly how a budget starts looking responsible to the board while catastrophic risk quietly stays on the payroll.

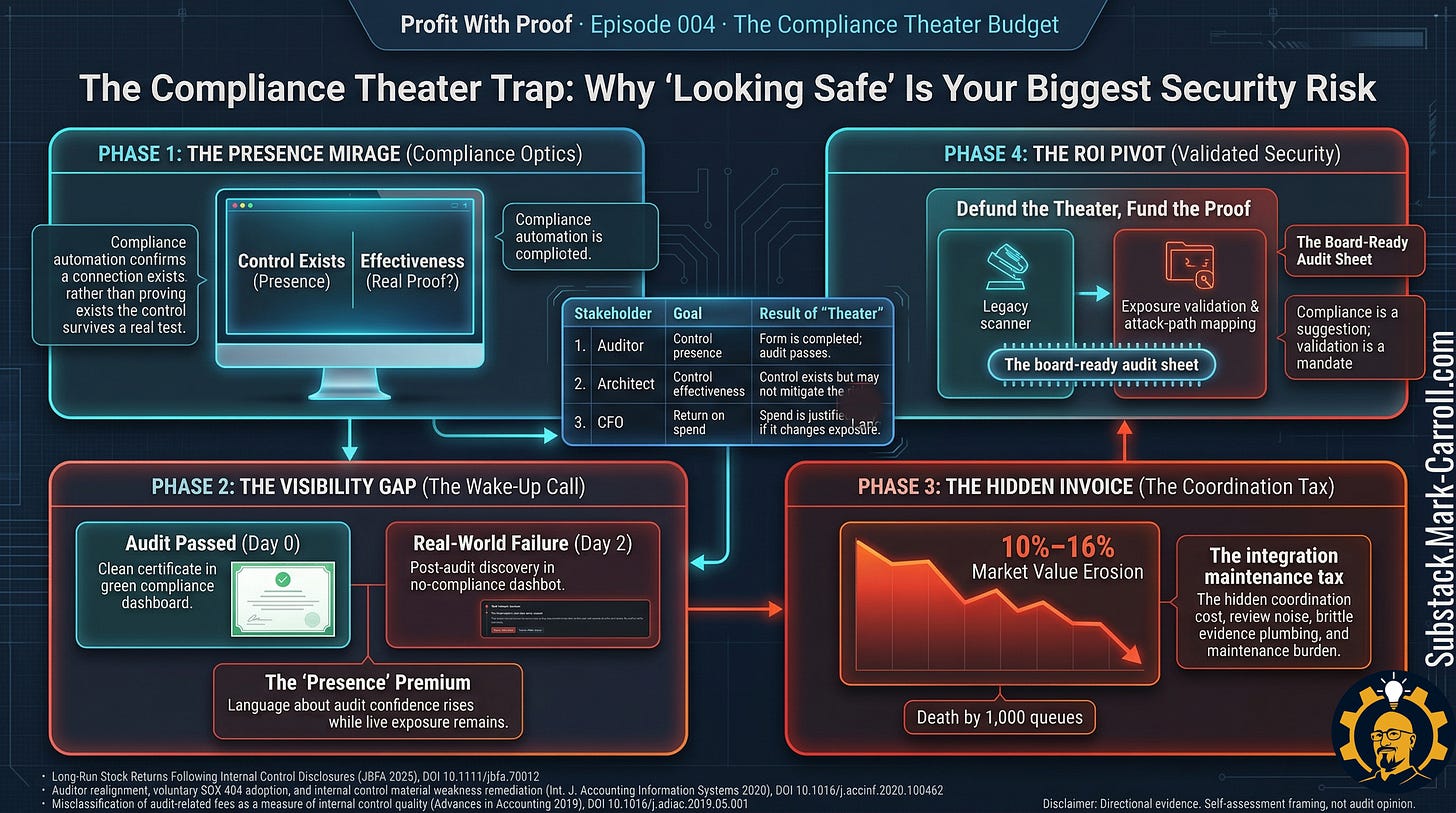

The system is optimized for the wrong outcome

The system is optimized for audit passage, not risk reduction. Passing the audit is measurable, time-bound, and tied to procurement decisions. Actual risk reduction is harder to measure and slower to demonstrate. So the rational organizational move is to invest in audit passage. The investment produces a certificate. The certificate is not the same as security.

Nobody in this system is lying. Nobody is careless. The audit framework rewards the production of evidence. Teams produce evidence. Finance renews the tools that collect it. The dashboard stays green. The control stays untested. The organization calls that compliance.

The budget distortion this creates is not a failure of intent. It is a failure of incentive design.

ARCHITECTS CAST

Designers: Compliance officers and security architects who define what “passing” means and build the audit framework that rewards presence over proof.

Inspectors: Internal audit teams and external auditors who verify that controls exist on paper and that screenshots can be produced on demand.

Enforcers: Enterprise clients demanding SOC 2 reports, regulators writing findings, and penetration testers who expose the gap between the certificate and reality.

The hidden cost: controls that exist but have never been exercised

Most leaders do not wake up hoping to spend heavily on optics. Engineering directors, product leaders, and CISOs fund controls because controls are supposed to reduce risk, support customer trust, and protect the infrastructure.

But as organizations scale, they begin to accumulate a quieter, heavier category of spend. Policy work. Evidence collection. Configuration snapshots. Renewal cycles for automated tools. Screenshots of user directories. Integration checklists. Dashboards designed to reassure the executive suite that something is in place.

That spend is not always waste. Some of it is necessary. Some of it is the unavoidable price of operating in B2B environments where enterprise customers, regulators, and auditors demand proof of maturity.

The trouble starts when the budget stops at presence.

A control can be present on paper and still fail entirely under stress. A control can be configured on Monday and quietly drift out of compliance by Friday. A control can appear flawlessly in a system of record, mapped elegantly to three international frameworks, and still go untested in the exact scenario that matters. A control can calm a room of executives while doing nothing to survive contact with reality.

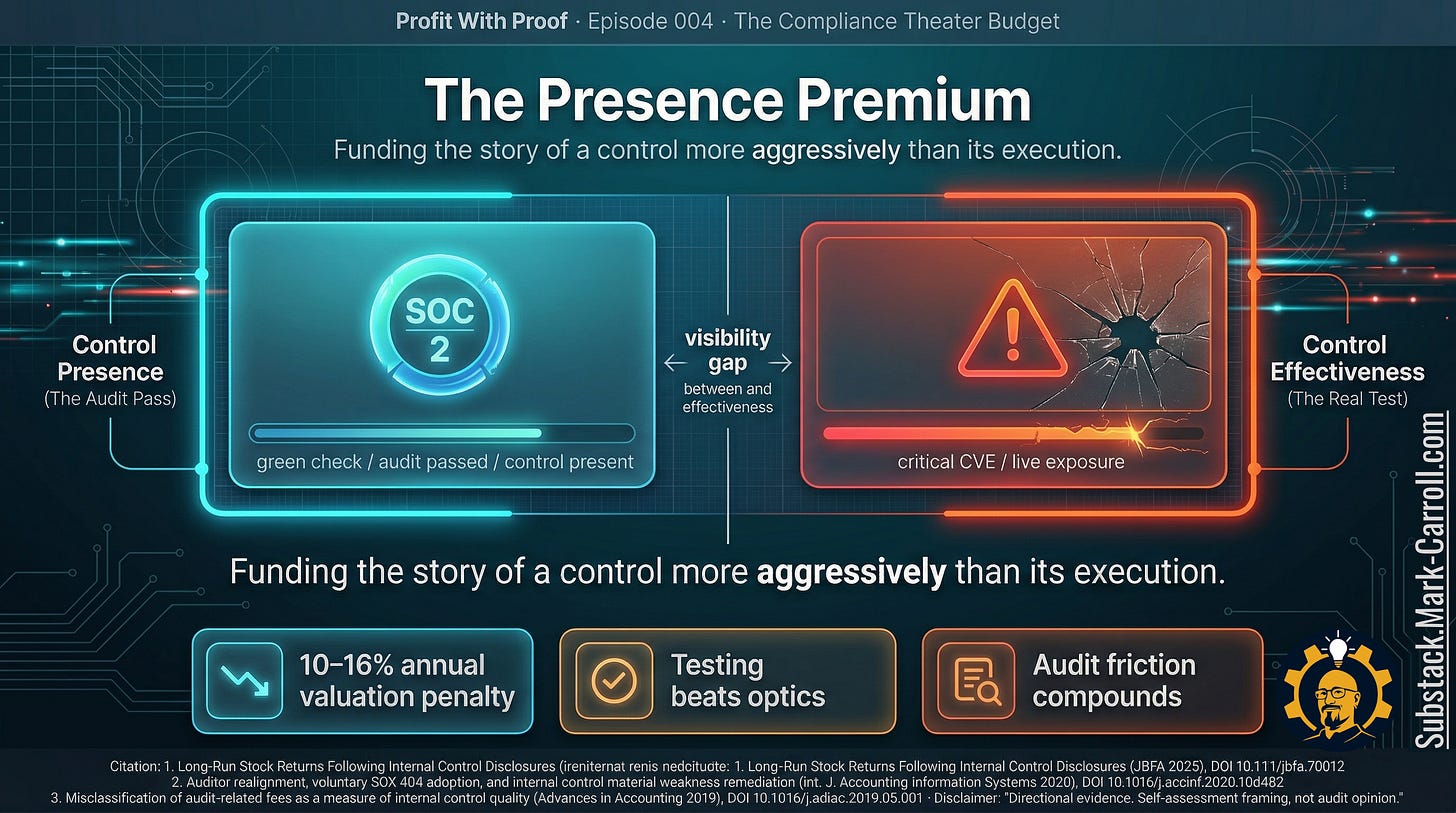

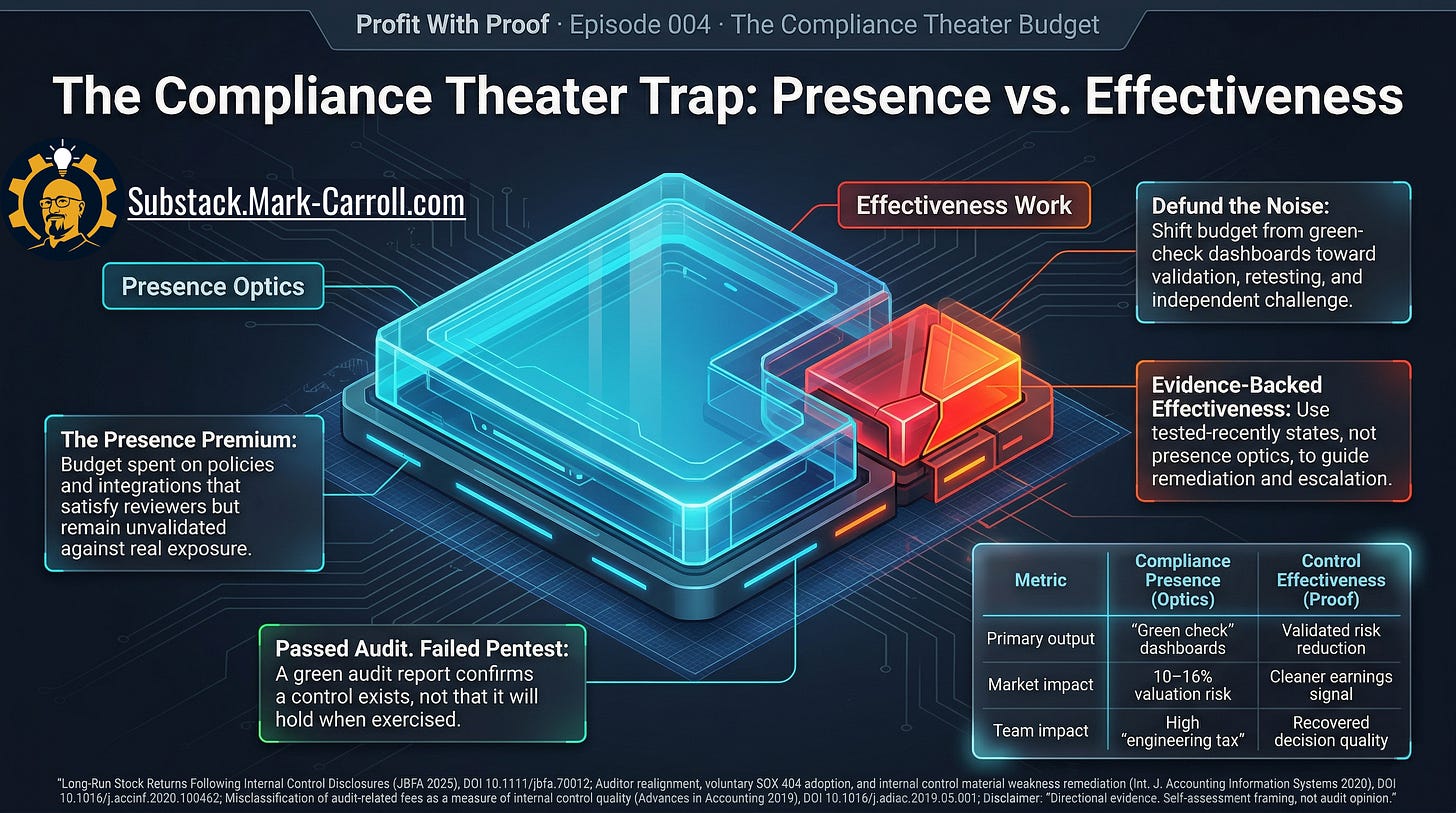

That is the Presence Premium. The capital and labor committed to controls whose existence is legible, while their actual effectiveness remains assumed.

Passed audit, live exposure

The passed-audit, failed-pentest sequence is legible to everyone in the business simultaneously.

The CISO sees the exposure and the immediate threat. The engineering leader sees the frantic scramble, the weekends burned and the sprint velocity destroyed to patch something that was supposed to be handled. The product leader sees the roadmap disruption, as feature development halts to accommodate emergency remediation. The CFO sees unplanned spend required to fix a problem they thought they just paid an auditor to prevent. The board sees the familiar, deeply unpleasant question: How did we have such a clean story right before we had such a messy reality?

That question is the whole ballgame. Because the moment it enters the room, nobody cares how many boxes were checked in theory. Nobody cares how the automated compliance dashboard looked on Tuesday.

They care which controls were actually tested. They care whether known weaknesses were remediated or swept aside. They care whether the organization can distinguish between a control that was present on paper and a control that had been exercised recently enough to deserve their confidence.

That is why this cannot be dismissed as a niche security complaint. It is a fundamental question of capital allocation: Is the business funding reassurance, or is it funding resilience? The cost of funding reassurance over resilience is cumulative, and it eventually comes due.

The continuous monitoring mirage

The problem is not the dashboards. It is using dashboards built to prove presence as if they had already proved effectiveness.

In many organizations today, what a continuous compliance dashboard tells you most clearly is that a control is connected, configured, documented, or being watched by an API. That is not meaningless. But it is not the same as knowing how that control behaves when a real edge case appears, when organizational ownership drifts, when an access exception granted six months ago is still quietly alive in the system, or when a threat actor behaves far less politely than the last auditor did.

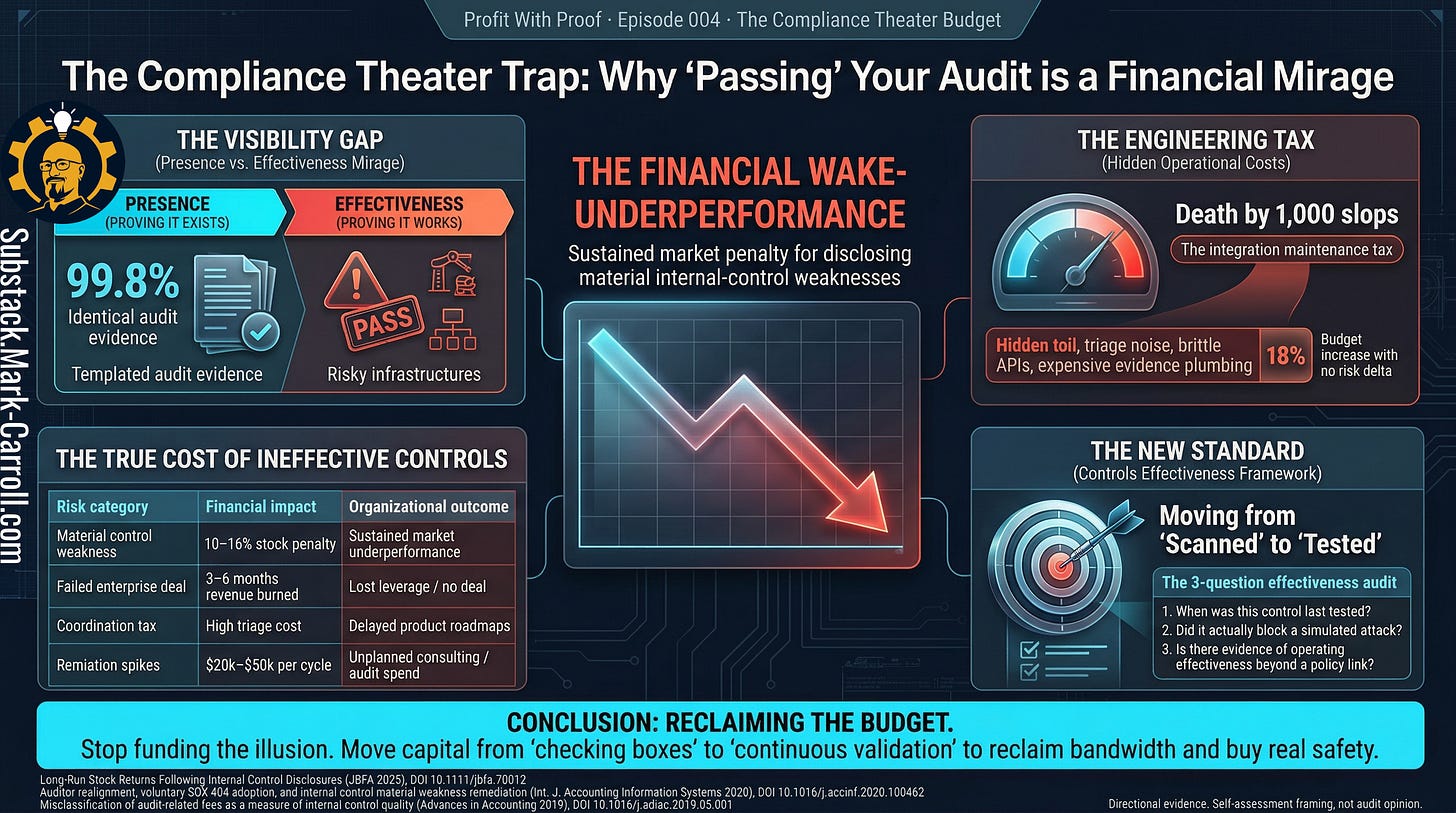

The dashboard might stay green for the auditor, but only because engineers are spending a disproportionate amount of time triaging low-context alerts, arguing over generic severity scores, and manually patching broken software integrations just to keep the compliance system of record happy.

This is the hidden Engineering Tax. When a control is present but ineffective, teams get staffed and directed around control failures rather than core product work. Engineering and product leaders pay a quiet tax on their product velocity to maintain the illusion of compliance.

That is how smart people end up with green dashboards and expensive surprises. Not because they are careless. Because the operating rhythm of the business rewards visible evidence of presence far more often than it rewards repeated evidence of effectiveness.

Evidence of presence is easier to collect, easier to defend in a budget meeting, and easier to renew annually. Evidence of effectiveness requires testing, friction, ownership, retesting, and the occasional awkward discovery that a heavily funded control has the structural integrity of decorative railing.

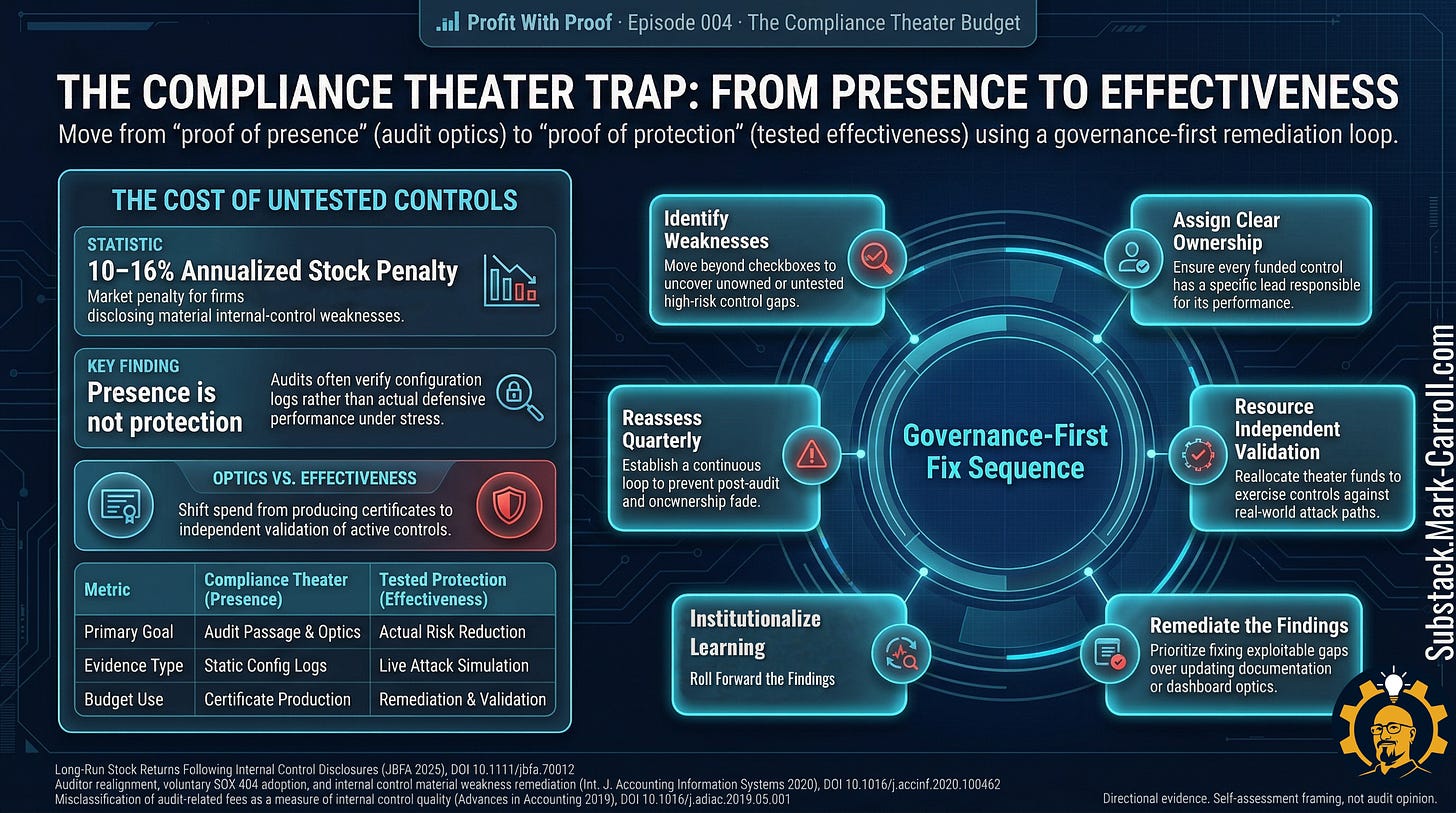

The governance gap hiding inside the budget

When a control remains weak for too long, the problem is rarely just a technical failure. Usually, the organization already knows something is off. A minor finding was logged in a previous quarter. A remediation item was parked in a backlog because it lacked a champion. A dependency changed and someone noticed but did not escalate. A policy exception stayed open because closing it required cross-departmental conflict. One team assumed another owned the next phase of the test.

A leader assumed the green dashboard meant someone else had already verified the underlying risk.

This is another broken route problem. The signal existed. The route between the signal and the decision did not.

This is where the budgeting story becomes a governance story.

Weak controls persist when no one formally owns their validation. Weak controls persist when remediation has no dedicated budget line. Weak controls persist when review cycles reward the production of evidence more than the production of challenge. Most dangerously, weak controls persist when it is politically easier to report presence than it is to admit uncertainty.

That is why the most useful corrective sequence is governance first. Identify the weakness. Assign clear, unavoidable ownership. Resource the validation of the control. Execute the remediation. Reassess on a recurring basis.

It is not glamorous. It will not trend. But it is more useful than buying another tool to monitor a broken process.

Where the money really goes

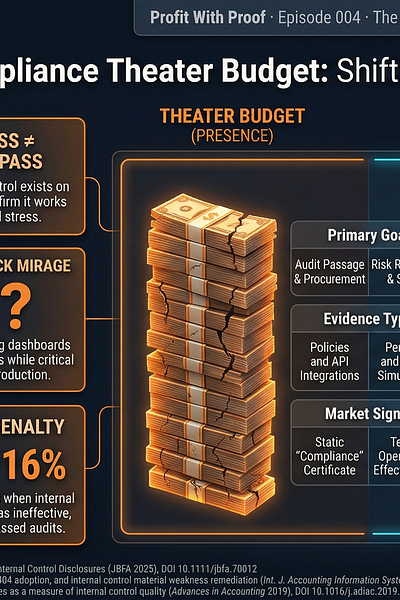

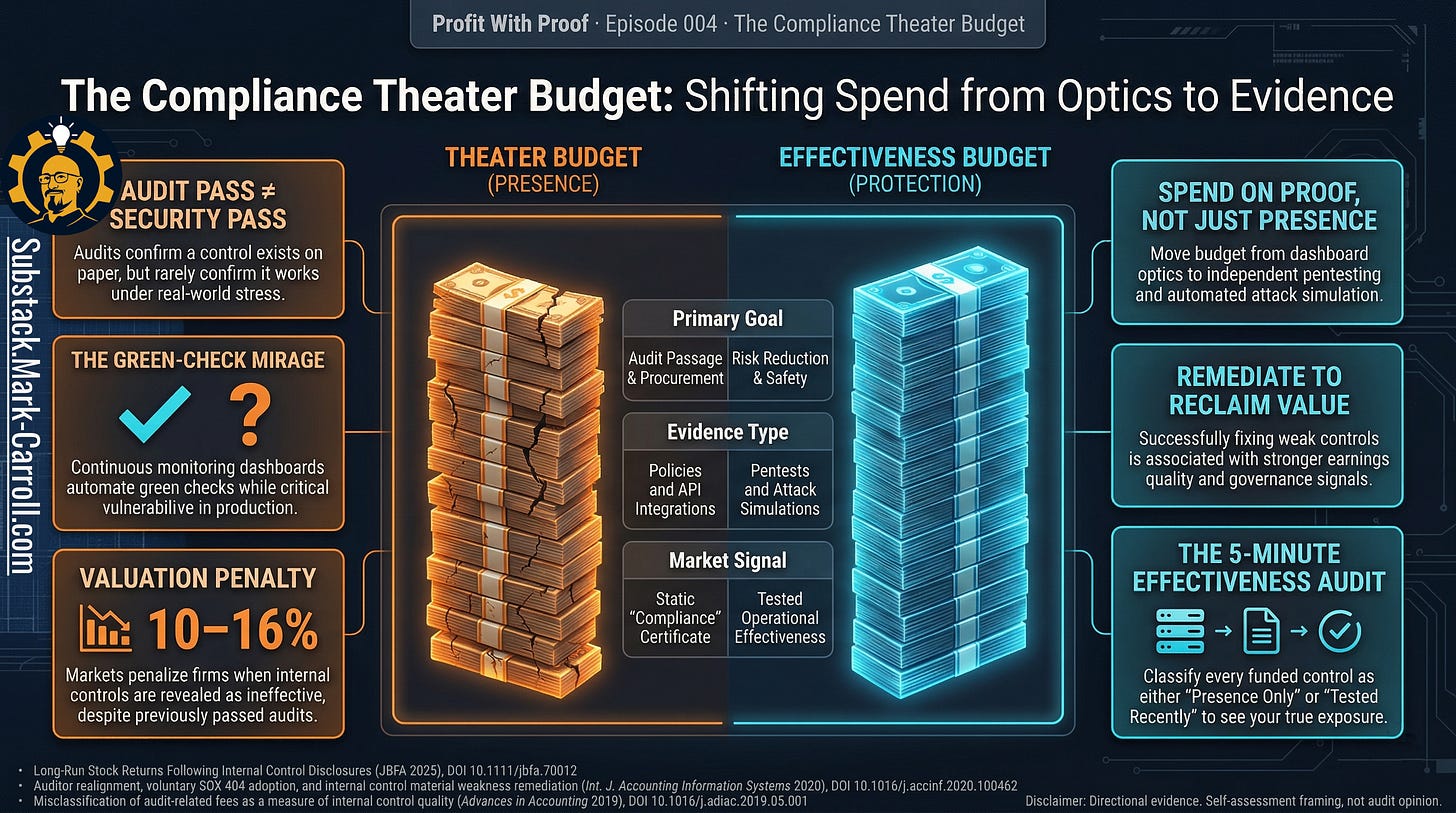

Once you start looking through the lens of presence versus effectiveness, the budget distortion becomes impossible to unsee.

Money goes to aggressive renewals that keep automated evidence flowing. Money goes to external consultants who help the organization stay audit-ready. Money goes to internal labor spent grooming artifacts, reconciling control descriptions, maintaining screenshots, and preparing for external reviews. Money goes to the annual five-figure manual pentest that delivers a 147-page PDF of theoretical risks just to satisfy a vendor questionnaire, regurgitating findings your internal tools already knew about.

Meanwhile, the budget for independent validation, targeted retesting, automated exposure validation, or serious follow-through on known attack paths is smaller, delayed, fragmented, or quietly absorbed by engineering teams already underwater.

That is the compliance theater budget. Not because all of it is fake. But because far too much of it is optimized to make control presence legible, while leaving control effectiveness critically underexamined.

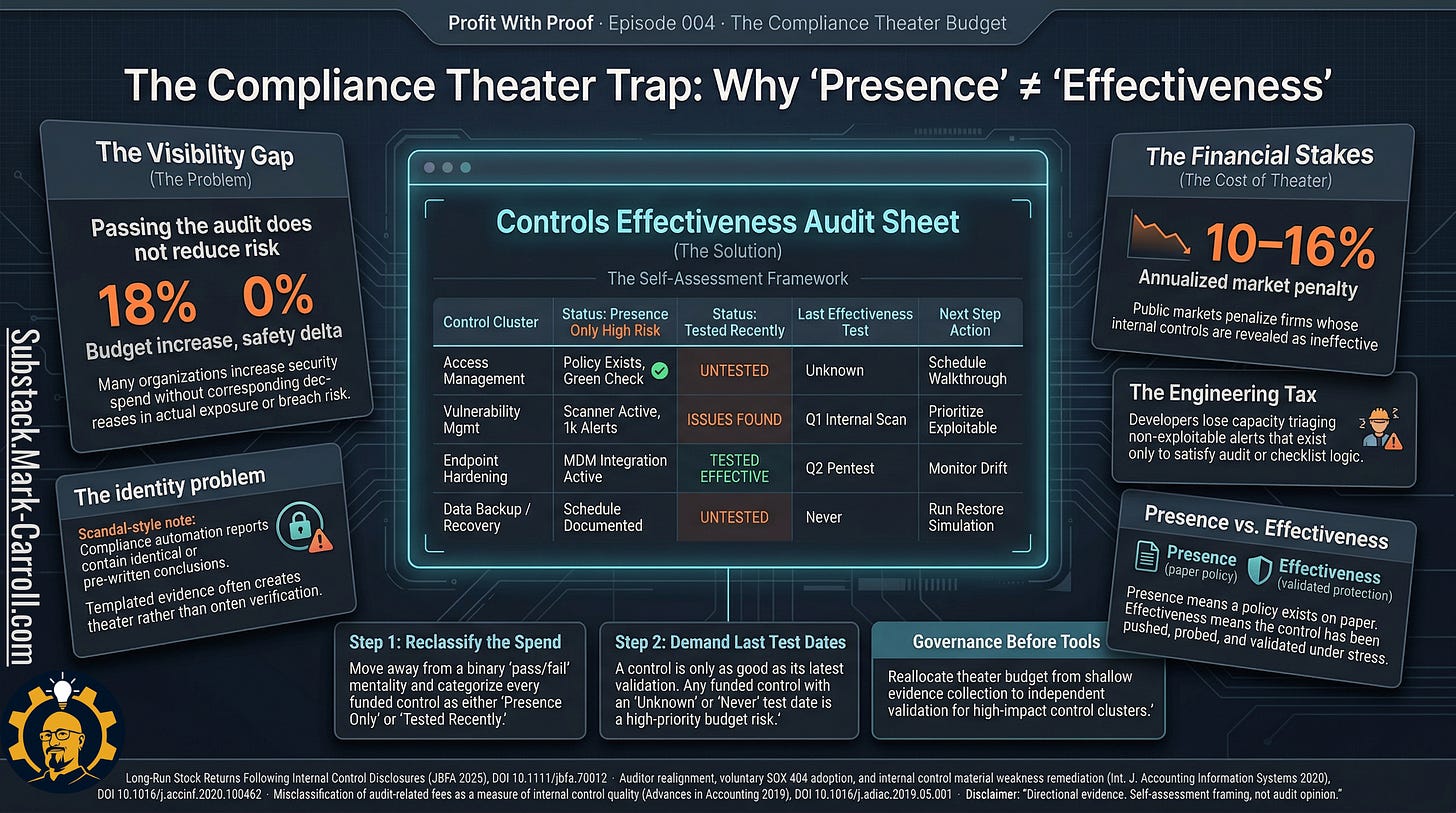

The financial risk of this imbalance extends well beyond the security and engineering departments. Financial research shows that firms forced to disclose material internal-control weaknesses underperform the market by roughly 10 to 16 percent annually in the years following disclosure. The capital market does not reward the presence of a beautiful dashboard. It imposes a sustained valuation penalty on organizations that fund controls that do not actually work.

Investing in effectiveness is not just a security best practice. It is a measurable financial advantage. Firms that actively remediate internal control weaknesses demonstrate stronger earnings quality and governance performance than those that leave weaknesses open. For a CFO or board, higher earnings quality means cleaner audits, less investor friction, and a stronger governance narrative.

The expensive surprise is not that the organization spent money on compliance. It is that the spending pattern created confidence significantly faster than it created proof.

How to calculate your own compliance theater budget

Start with two numbers, not one opinion.

Compliance demonstration budget includes: audit preparation labor, evidence collection and maintenance labor, GRC or compliance platform annual renewals, external consultant support for audit readiness, and documentation work tied to control presentation.

Active controls testing budget includes: penetration testing, attack simulation, exposure validation, retesting after remediation, and labor specifically directed at proving a control still holds.

Presence-to-Effectiveness Ratio = Compliance demonstration budget ÷ Active controls testing budget

If you cannot calculate this ratio in under 30 minutes, that is not a reporting inconvenience. That is the finding.

If your organization spends $420,000 a year on audit preparation, evidence tooling, consultant support, and compliance reporting, and $90,000 on penetration testing, attack simulation, retesting, and control validation, your ratio is 4.7 to 1. You are spending nearly five dollars proving controls exist for every dollar testing whether they work.

If 11 of your 26 funded controls have not been effectiveness-tested in the last twelve months, then 42 percent of your funded control estate is currently being carried on presence rather than recent proof.
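If you would rather check the arithmetic than take it on trust, here is a minimal Python sketch of both calculations. The function names are my own illustration, not part of any tool, and the inputs are simply the example figures above. Swap in your own numbers.

```python
def presence_to_effectiveness_ratio(demonstration_budget: float,
                                    testing_budget: float) -> float:
    """Demonstration dollars spent per dollar of active controls testing."""
    if testing_budget <= 0:
        # With no testing budget the ratio is undefined, which is itself a finding.
        raise ValueError("active controls testing budget must be positive")
    return demonstration_budget / testing_budget


def untested_share(untested_controls: int, funded_controls: int) -> float:
    """Fraction of funded controls with no effectiveness test in the lookback window."""
    if funded_controls <= 0:
        raise ValueError("funded_controls must be positive")
    return untested_controls / funded_controls


# Example figures from the article, not benchmarks.
ratio = presence_to_effectiveness_ratio(420_000, 90_000)
share = untested_share(11, 26)

print(f"Presence-to-Effectiveness Ratio: {ratio:.1f} to 1")  # 4.7 to 1
print(f"Carried on presence only: {share:.0%}")              # 42%
```

The only design choice worth noting is the guard against a zero testing budget. Rather than returning infinity, the sketch stops and makes you look at the input.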

Your numbers will differ. The structural question will not.

Now ask the harder question. What is one funded control your organization currently describes as protective that has not been effectiveness-tested in the last twelve months? If you cannot name one, you probably have more than one.

The verdict

Your core problem as an engineering or product leader is not that you have no controls.

Your problem is that you cannot quickly, confidently tell which of your funded controls are merely present and which have been tested recently enough to deserve your confidence.

That is the split that changes the entire meaning of your security spend. Without that distinction, you are not managing a control environment. You are managing the appearance of one.

What to do next

Do not start by trying to score every control in the enterprise. That way lies spreadsheet folklore and the slow death of everyone’s will to act.

Start with the five-minute executive drill.

1. Pull your top 10 funded controls by spend or downside exposure.
2. Mark whether each is present. Does it exist on paper and in the systems?
3. Record the last effectiveness test date. When was this control last forced to prove it worked?
4. Note how that test was performed. Automated ping, manual walkthrough, or simulated attack?
5. Tag each control: presence only, tested recently with issues found, or tested recently with no major issues.
6. Circle the three controls with the highest downside and the stalest testing history.

Those three controls are your next quarter.
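If your control list already lives in a spreadsheet export, the drill can be sketched in a few lines of Python. Treat this as one possible reading, not the method. The `Control` fields, the 1-to-5 downside scale, the 365-day window for "recently," the rule that anything outside that window counts as presence only, and the sample controls are all my assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Control:
    name: str
    present: bool
    downside: int                     # assumed scale: 1 (low) to 5 (severe)
    last_test: Optional[date] = None  # last effectiveness test, if any
    test_method: str = ""             # e.g. "automated ping", "simulated attack"
    issues_found: bool = False


def tag(control: Control, today: date, window_days: int = 365) -> str:
    """Apply the three tags from the drill (the window is an assumption)."""
    if control.last_test is None or (today - control.last_test).days > window_days:
        return "presence only"
    if control.issues_found:
        return "tested recently with issues found"
    return "tested recently with no major issues"


def staleness_days(control: Control, today: date) -> int:
    """Days since the last effectiveness test; never-tested sorts as stalest."""
    if control.last_test is None:
        return 10**6
    return (today - control.last_test).days


def next_quarter(controls: list[Control], today: date, n: int = 3) -> list[Control]:
    """Rank by highest downside first, then by stalest testing history."""
    ranked = sorted(
        controls,
        key=lambda c: (c.downside, staleness_days(c, today)),
        reverse=True,
    )
    return ranked[:n]


# Hypothetical sample data, invented purely to show the mechanics.
today = date(2025, 1, 1)
controls = [
    Control("Dependency patching", True, 5),
    Control("Backup restore", True, 5, date(2023, 6, 1), "manual walkthrough"),
    Control("Access reviews", True, 3, date(2024, 11, 1), "manual walkthrough", True),
    Control("Endpoint agent", True, 2, date(2024, 9, 15), "automated ping"),
    Control("WAF rules", True, 4, date(2024, 3, 1), "simulated attack"),
]

for c in controls:
    print(f"{c.name}: {tag(c, today)}")

print("Next quarter:", [c.name for c in next_quarter(controls, today)])
```

Sorting on downside first and staleness second is a judgment call. If your organization weighs stale testing more heavily than raw exposure, flip the key order and see whether the same three names come out.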

Then combine the tagging with your ratio calculation. If your Presence-to-Effectiveness Ratio is above 3 to 1, your budget is currently structured to reassure more than to protect. That is the number worth bringing to the next QBR.

This is not a replacement for formal audits. It is what you should be doing between them. It is how you avoid discovering, at the worst possible moment, that your cleanest, most highly funded controls were the ones least likely to survive contact with reality.

For subscribers

If the public article gives you the diagnosis, the paid sheet gives you the meeting language.

Subscribers receive the Controls Proof Ratio Calculator. It separates the compliance budget that is reducing risk from the budget that is producing certificates by turning your numbers into a single, defensible Proof Ratio: demonstration dollars for every effectiveness dollar.

Instead of a flat checklist, you get a small workbook. One tab for Budget Inputs, one for your Control Inventory, one for an Executive Summary strip, and one for a Heatmap & Priorities view you can drop into a slide. Together they calculate your demonstration spend, effectiveness spend, test coverage rate, verified control rate, cost per tested control, cost per verified control, and the Presence Premium still stranded in untested controls.

Designed to be opened and understood in under five minutes, the summary tab is built to be screenshotted or forwarded to a CISO or CFO as a budget‑prioritization argument. The priorities view ranks your controls by cost, risk tier, test recency, and recent failures so you can say, “These are the three controls we should validate next, given what we already spend.”

Unlike earlier Profit With Proof artifacts, this one is not a simple cost calculator. Its job is to give you a forwardable, board‑safe snapshot of how your current control spend breaks down between optics and tested effectiveness, so you can attach your own local incident and exposure numbers to the right clusters. It is a self‑assessment and planning tool, not an audit opinion or proof of security.

That is the conversation this workbook is built to support: Where are we still spending money on controls we cannot honestly say we have exercised recently enough to deserve our confidence?

The closing point

The mature question is no longer “Do we have controls?”

Plenty of organizations can answer that. The harder, more useful question is “Which of these controls have we actually exercised recently enough to deserve our confidence?”

If you cannot quickly name the ratio between your compliance demonstration budget and your active controls testing budget, that is not a documentation gap. That is the finding.

And if you cannot name one funded control that has not been effectiveness-tested in the last twelve months, the theater is probably bigger than the dashboard admits.

That is the question that turns control spend into something more valuable than optics. That is the question that gives your next audit, your next pentest, and your next boardroom conversation a fighting chance to stop contradicting each other.

That is also the larger case behind my upcoming book, Collaborate Better. Better collaboration is not about being nicer in meetings. It is about reducing avoidable friction before it turns into waste, delay, and preventable cost. When systems fail to communicate, organizations absorb the damage. Collaborate Better is the manual for stopping that human and financial bleed. Learn more at CollaborateBetter.us.

Next week in Episode 5: The Integration Penalty.

P.S. If you could move only three controls from presence only to tested recently this quarter, which three would you pick, and what exactly has stopped you from testing them already?

Regards,

Mark 👋

