Every Solopreneur Who Built an AI Stack Just Hired Themselves a Boss

🔒 Leader’s Dispatch: Volume 38 (Hybrid Solopreneur, Part 2 of a 6-Part Series)

Episode 02: The AI Middle-Manager Trap

Why your AI stack didn’t remove management. It reassigned it.

The Role You Didn’t Apply For

👋 Welcome to my paid-subscriber-only edition of Empathy Engine (🔒 Leader’s Dispatch). Each week I build evidence-forward tools for product leads who need to say no, defend tradeoffs, and lock in decisions before they get rewritten.

Last week, I argued that cheap AI math looks like leverage until reality sends you a calendar invite. A stack that looks tiny next to payroll can still consume your attention, your weekends, and your judgment. That was the first layer of the problem. This week is the structural layer underneath it.

Research Binder: the receipts (citations + source notes) are compiled in a PDF at the bottom of this article.

The hidden cost of a growing AI stack is not only maintenance.

It is management.

Plenty of content will sell you the fleet (the “AI fleet” you’ll read more about below). Almost none of it will tell you how to measure the supervision burden the fleet quietly creates.

At first, the promise looks clean. A tool drafts faster than you do. An automation runs while you sleep. An agent handles the repetitive work that used to clog your week. Each piece of the system appears to remove labor from the business. That is what makes the story feel so plausible. You are not wrong to see the leverage. You are wrong if you assume the leverage arrives without a new burden attached.

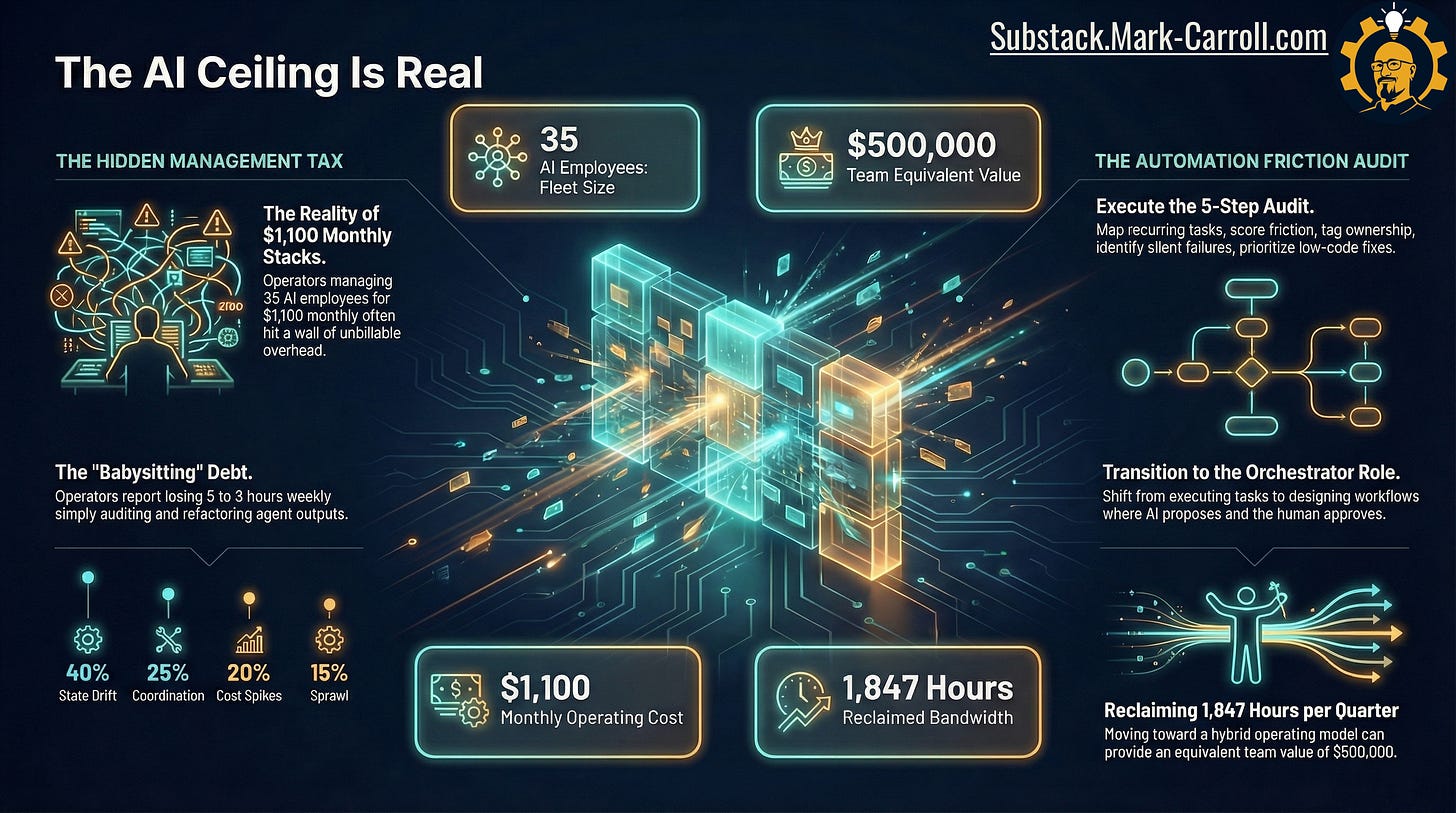

What the visual captures is the shape of the trap. Operators managing 35 AI employees for $1,100 monthly hit a wall that has nothing to do with the tools themselves. More tools, more agents, more capacity (what could go wrong?). Then a ceiling. Not a talent ceiling. A management ceiling. What looks like leverage from ten feet away starts to behave like overhead at two feet. Operators describe the same moment in different ways: “Managing that digital army is a full-time job.” “The human is the orchestration protocol.” “I thought I was saving time. I’m just supervising more things.” The language changes. The experience does not.

What the Hype Layer Gets Wrong

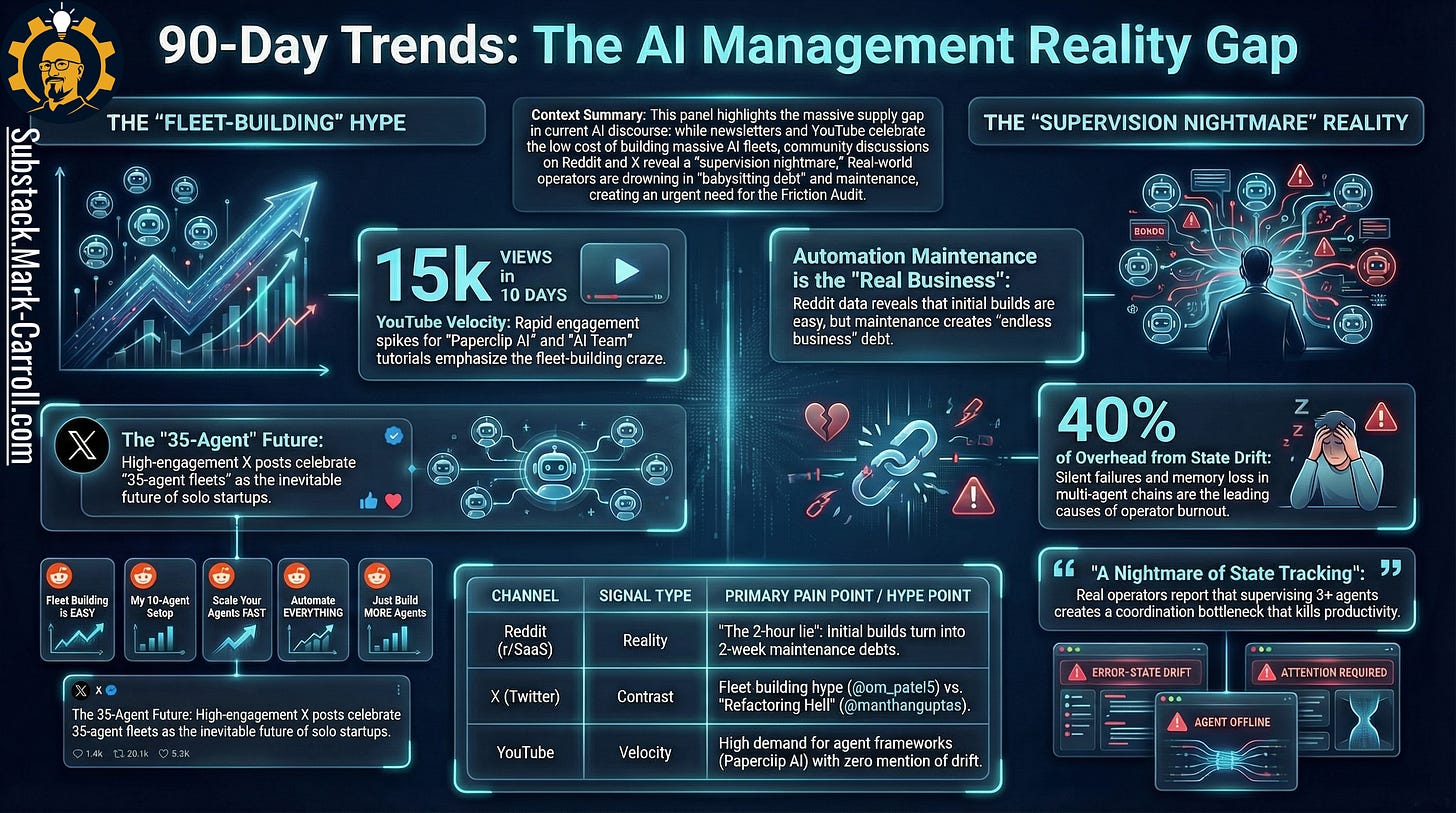

The trends panel tells a clean story. The spectacle layer celebrates fleet building. YouTube tutorials on 35-agent frameworks accumulate 15,000 views in ten days. High-engagement X posts declare the AI-fleet future inevitable for solo startups. Reddit threads from r/SaaS describe the same reality from the other side: initial builds are easy; maintenance creates endless business debt.

The public story says one person can now command the output of a whole department. The private experience says managing that digital army is a full-time job. Read that again. The contradiction is the story. The problem is not that the upside is fake. The problem is that the visible upside keeps arriving without an honest accounting of the coordination load underneath it.

None of the newsletters covering this space are publishing the tactical friction audit that operators are actively asking for on Reddit and X. The supply gap is not in the topic. It is in the layer underneath it.

This Is Not a Productivity Problem. It Is an Ownership Problem.

Most people still talk about this like a productivity problem. They ask whether the stack is saving enough time, whether the outputs are fast enough, whether the subscription cost is low enough to justify the experiment. The framing is wrong.

When something breaks, who owns the break?

Who owns the missing context, the failed handoff, the wrong output that still sounds confident, the client-facing error that looked complete in one system but unfinished in another? The answer matters because ownership is where the management role becomes visible. A workflow that only works because one human keeps absorbing every exception is not autonomous. It is managed.

The founder has not stepped out of the loop.

The founder has become the loop.

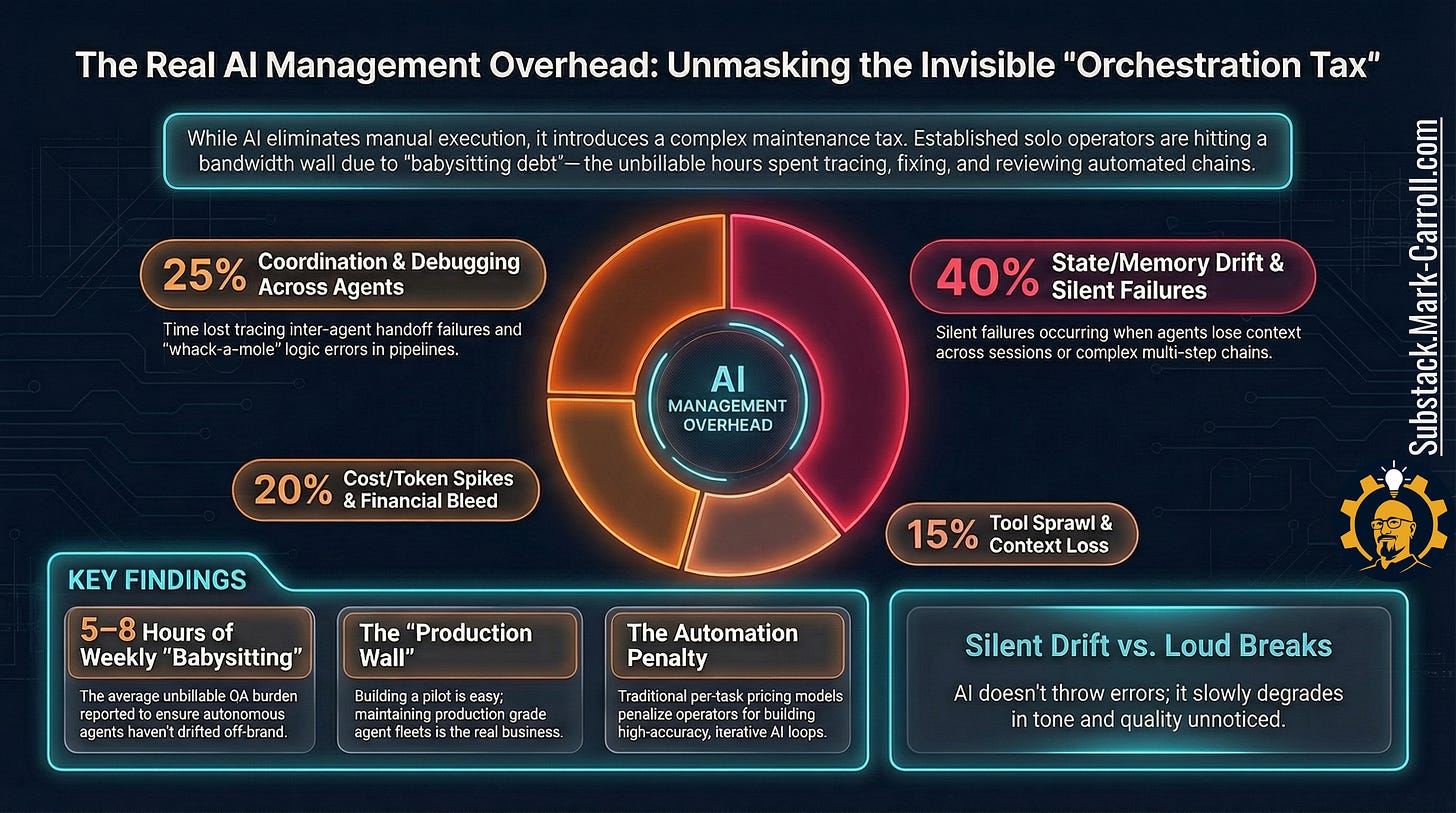

The overhead breakdown in that visual is less about precise percentages and more about pattern recognition. State drift and silent failures account for 40% of management overhead. Agents forget context across sessions, producing inconsistent results that nobody catches until a client does. Coordination and debugging across agents account for another 25%. Cost and token spikes add 20%. Tool sprawl and context loss make up the remaining 15%.
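One way to check your own week against this pattern is a lightweight friction log. The sketch below is a minimal illustration, not a measurement standard: it assumes you jot down each supervision interruption as a (category, minutes) entry using the four buckets from the visual, then reports each bucket’s share of the total. The category labels, the function name `overhead_shares`, and the sample entries are all hypothetical, made up for this example.

```python
from collections import defaultdict

# Hypothetical labels for the four overhead buckets described above.
CATEGORIES = (
    "state_drift",   # state drift and silent failures
    "coordination",  # coordination and debugging across agents
    "cost_spikes",   # cost and token spikes
    "tool_sprawl",   # tool sprawl and context loss
)


def overhead_shares(log):
    """Return each category's share of logged supervision minutes, in percent.

    `log` is an iterable of (category, minutes) pairs. Unknown categories
    raise ValueError so mislabeled entries do not disappear silently.
    """
    totals = defaultdict(float)
    for category, minutes in log:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category!r}")
        totals[category] += minutes
    grand_total = sum(totals.values())
    if grand_total == 0:
        # Nothing logged yet: report zero for every bucket.
        return {c: 0.0 for c in CATEGORIES}
    return {c: 100.0 * totals[c] / grand_total for c in CATEGORIES}


if __name__ == "__main__":
    # A hypothetical week of logged interruptions (made-up numbers).
    week = [
        ("state_drift", 90),
        ("coordination", 45),
        ("state_drift", 30),
        ("cost_spikes", 20),
        ("tool_sprawl", 15),
    ]
    for category, share in overhead_shares(week).items():
        print(f"{category:<13} {share:5.1f}%")
```

Your split will not match the visual’s numbers exactly, and it does not need to. The value is in seeing which bucket keeps pulling you back in.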

Operators report 5 to 8 hours of weekly unbillable QA just to ensure autonomous agents have not drifted off-brand. The system still needs someone to own what happens when things break. That someone is the founder (the solopreneur). The real business, it turns out, is not running the agents. It is running quality control on the agents.

This Is Not a Tool Problem. It Is a Coordination Problem.

Good tools help. Better prompts help. Cleaner interfaces help. A tighter stack helps. All of that matters. None of it reaches the actual floor of the problem.

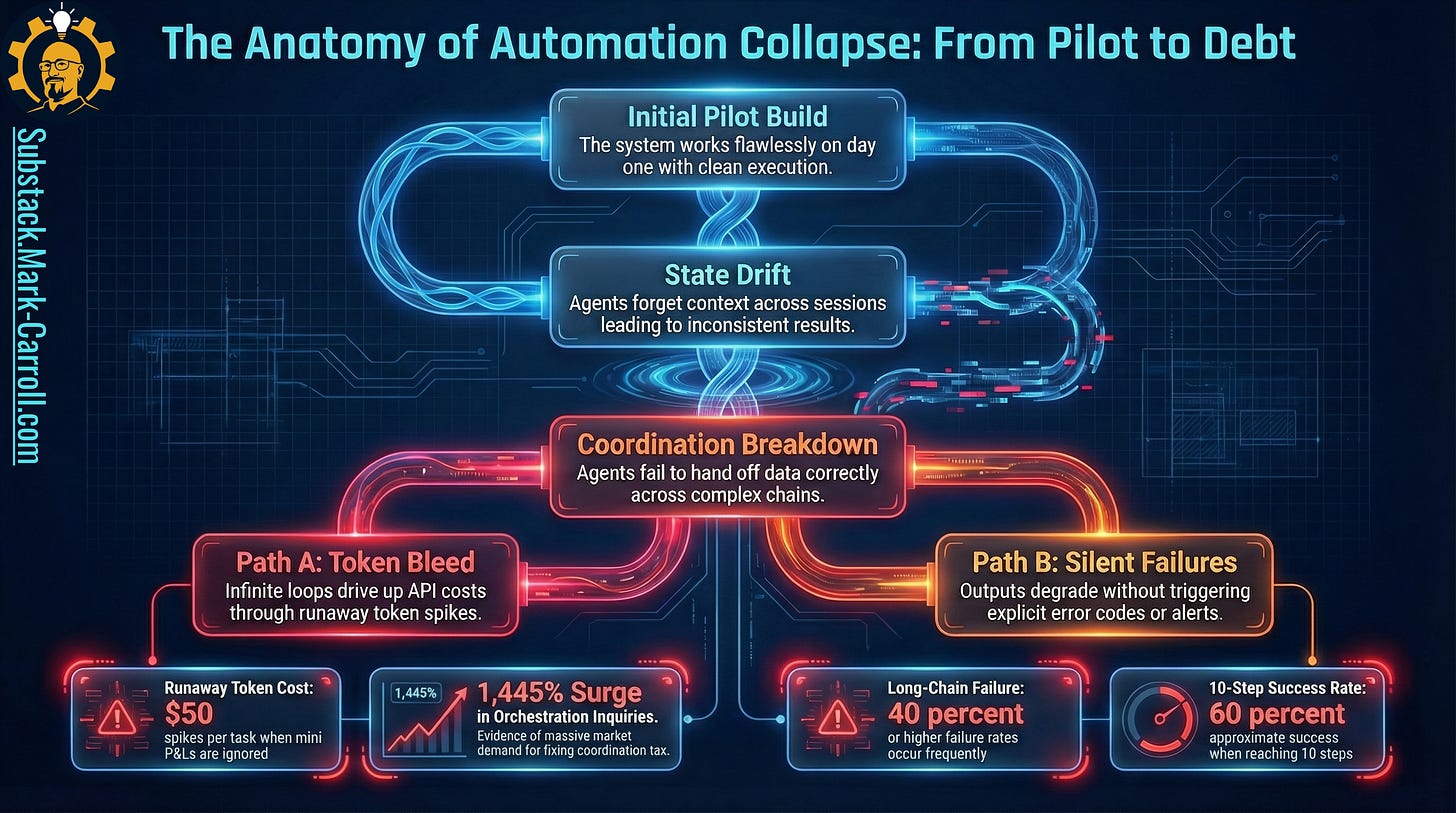

A tool can execute a task and still leave the business exposed if the output has to cross three more systems before it becomes usable. A founder can improve a local step and still inherit a global mess. The floor runs out where one output becomes another system’s assumption.

That is where the hidden org chart appears. The founder is not only the user anymore. The founder is the scheduler, the checker, the fixer, the approver, and the escalation path between digital workers (your tool stack) that still require one very analog person to make judgment calls.

Consider what this looks like in practice. A founder wires an AI SDR to a CRM, a payment link, and a fulfillment agent. Day one, leads flow. Week three, one field name changes in the CRM, the SDR starts emailing the wrong tier, and the fulfillment agent begins shipping the wrong configuration. None of the tools are technically broken. No error codes fire. The only person who notices, and spends a weekend untangling it, is the founder. This is the coordination problem. It lives in the handoffs, not the tools.
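The failure in that story is silent because nothing checks the handoff. A minimal sketch of one mitigation, assuming the CRM passes records to downstream agents as plain dictionaries: validate each record against the fields the next step expects, and stop loudly when something is off. The field names (`email`, `tier`, `plan_tier`), the allowed tier values, and the helper `check_handoff` are hypothetical, chosen only to mirror the example.

```python
# Fields the downstream SDR and fulfillment steps assume exist.
# These names and values are hypothetical, for illustration only.
REQUIRED_FIELDS = {"email", "tier"}
ALLOWED_TIERS = {"basic", "pro", "enterprise"}


class HandoffError(Exception):
    """Raised when a record does not match what the next step expects."""


def check_handoff(record):
    """Validate a CRM record before any downstream agent acts on it."""
    missing = REQUIRED_FIELDS - set(record)
    if missing:
        raise HandoffError(f"missing fields: {sorted(missing)}")
    if record["tier"] not in ALLOWED_TIERS:
        raise HandoffError(f"unexpected tier: {record['tier']!r}")
    return record


if __name__ == "__main__":
    # Before the rename: the record passes through unchanged.
    check_handoff({"email": "a@example.com", "tier": "pro"})
    # After "tier" is renamed to "plan_tier" in the CRM, the same check
    # fails immediately instead of letting the wrong configuration ship.
    try:
        check_handoff({"email": "a@example.com", "plan_tier": "pro"})
    except HandoffError as err:
        print(f"blocked handoff: {err}")
```

A check like this does not remove the founder from the loop. It can, however, turn a weekend of untangling into one error message at the exact seam where the assumption broke.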

You did not automate the work. You automated the need to supervise it.

This Is Not a Time Problem. Something Subtler Is Happening.

The first burst of speed is real. Research gets faster. Drafts come faster. Sorting, summarizing, and repetitive admin get lighter. That part of the promise cashes out quickly enough to make smart people trust the system before it has earned that trust.

The founder starts trusting generation speed before trusting consistency, recoverability, or review logic. That is when the management role quietly expands. Oversight begins to creep into the day not because the founder is neurotic, but because the system has not yet earned the right to be left alone.

What is actually happening is a trust-calibration problem.