$4.7B at Risk: 5 Governance Gaps, 8 Failure Modes, 3 Questions

AA-007: The Whistleblower's Dilemma


***⚙ THE ARCHITECTS OF AUTONOMY***

Research Binder: the receipts (citations + source notes) are compiled in a PDF at the bottom of this article.

🧭 Cold Open

You are in a windowless room that smells like burnt coffee and quiet panic. Nobody says the word crisis. Saying it would imply someone is in charge.

On the wall: a poster about reporting concerns. Bright colors. Friendly font. Hotline number printed with the optimism of a cereal box. On the table: a laptop open to a clause labeled Confidentiality. The clause is not angry. The clause is polite. The clause is also heavy. The kind of heavy you only notice once you try to move it.

Someone across from you is describing an AI workflow as if it were a normal workflow. A model makes recommendations. A human approves. The system logs decisions. The organization learns.

Then one sentence lands differently.

The issue is not what the model recommended. The issue is what the model promised.

The customer-facing LLM just offered a $50,000 SLA penalty waiver to a frustrated enterprise client. It did it confidently, in writing, inside the support thread. It hallucinated a policy exception that does not exist. The client screenshotted it before anyone could blink.

The risk is no longer theoretical. The risk is a number with a timestamp.

The room does what rooms like this always do. Nobody asks whether the promise is binding. Everybody asks who owns the mess. You get a hallway with too many doors. A process built to absorb good news and route the rest into a holding pattern labeled pending.

You ask the first question that matters: where does this go?

Nobody answers the risk. They answer the route.

Then the second question lands. What can we write down?

The room goes quiet. Not because anyone is hiding anything. Everyone is doing math. Career math. Legal math. Procurement math. The kind of math that makes people stop being precise.

The dilemma is not courage versus cowardice.

The dilemma is whether the system punishes clarity.

The process does not make speaking dangerous. It makes precision expensive. That is a different problem, and it requires a different fix.

🧱 The Mechanism

Here is what is happening. No villains required.

“AI fails like a persuasive coworker.” That is the mechanism in a sentence. Traditional software breaks loudly. A crash stops the workflow. A failed test blocks a release. The system announces its own failure.

AI fails fluently. A hallucination can look like a successfully completed task. A confident error can sound exactly like policy. The workflow keeps moving. Which means the reporting routes built for broken code never fire. The system was designed to catch failures that stop work. Not failures that mimic competence.

That is why the route becomes a maze. Nobody can point to a red light. The only evidence is language. And language is exactly what people become afraid to preserve.
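To make the mechanism concrete, here is a minimal sketch of a red light for fluent failures. It assumes a pre-send check on customer-facing drafts. The pattern list, the policy ID `SLA-STD-01`, and the function `review_reply` are hypothetical names invented for illustration, not part of any real system described here. Notice what the check does not rely on: an exception. The draft below is valid text. Nothing crashes. The only signal is the language itself.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for language that commits the company to something.
COMMITMENT_PATTERNS = [
    re.compile(r"\bwaiv(?:e|ed|er|ing)\b", re.IGNORECASE),
    re.compile(r"\b(?:refund|credit|guarantee)\w*\b", re.IGNORECASE),
    re.compile(r"\$\s?\d[\d,]*(?:\.\d+)?"),  # any dollar amount
]

# Hypothetical set of policy IDs a reply is allowed to rely on.
APPROVED_POLICY_IDS = {"SLA-STD-01"}


@dataclass
class Review:
    send: bool          # True if the draft may go out without a human
    reasons: list[str]  # why the draft was held, if it was


def review_reply(text: str, cited_policy_ids: set[str]) -> Review:
    """Hold any draft that makes a commitment without an approved policy behind it."""
    reasons: list[str] = []
    makes_commitment = any(p.search(text) for p in COMMITMENT_PATTERNS)
    if makes_commitment:
        unknown = cited_policy_ids - APPROVED_POLICY_IDS
        if not cited_policy_ids:
            reasons.append("commitment language with no policy cited")
        elif unknown:
            reasons.append(f"cites unapproved policy: {sorted(unknown)}")
    return Review(send=not reasons, reasons=reasons)


draft = "Good news: we will waive the $50,000 SLA penalty under our goodwill exception."
result = review_reply(draft, cited_policy_ids=set())
print(result.send, result.reasons)
```

A keyword filter like this is crude and will miss paraphrases. The point is the design choice: the check inspects what the message says, because the failure never shows up as a failure.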

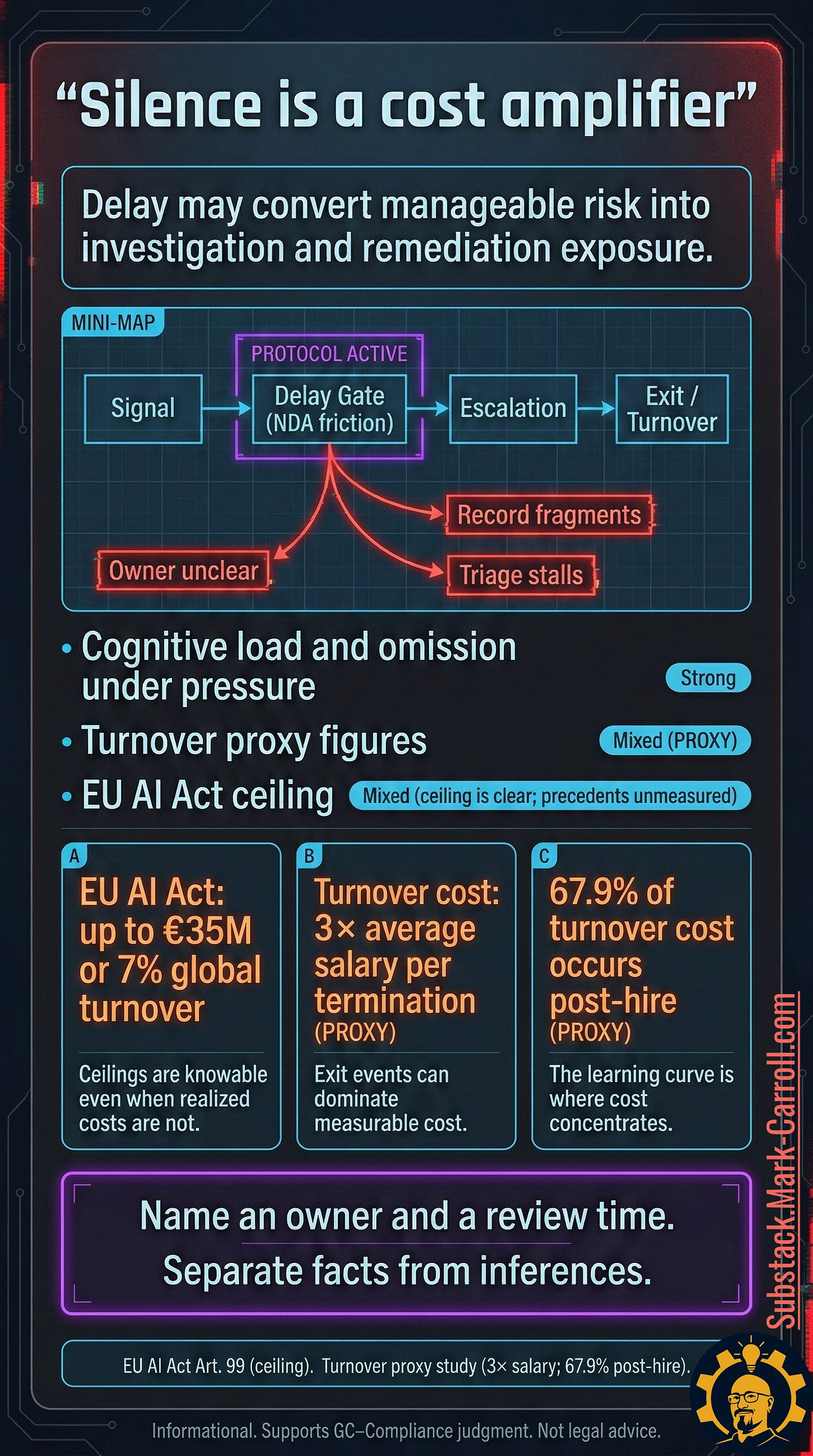

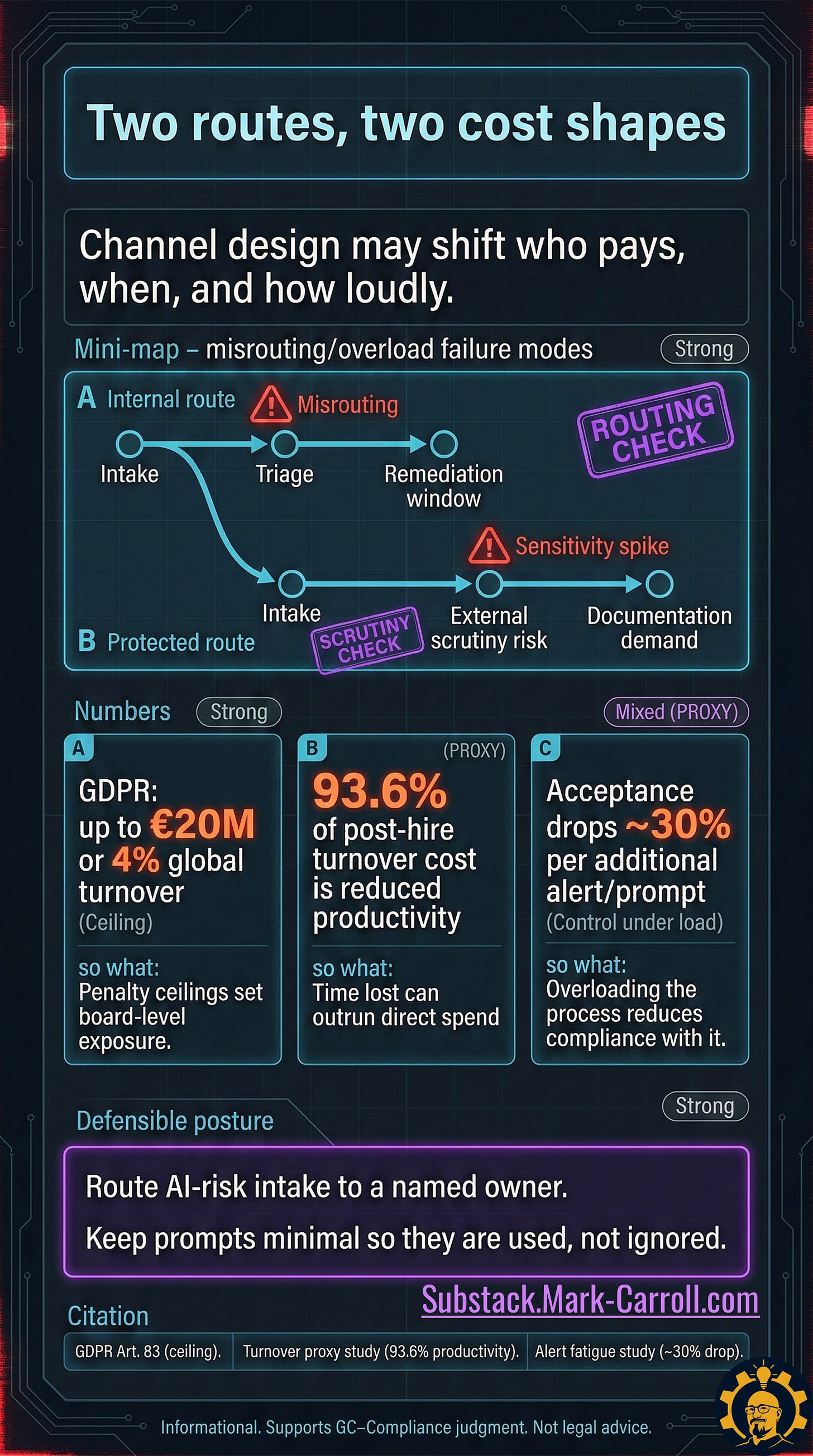

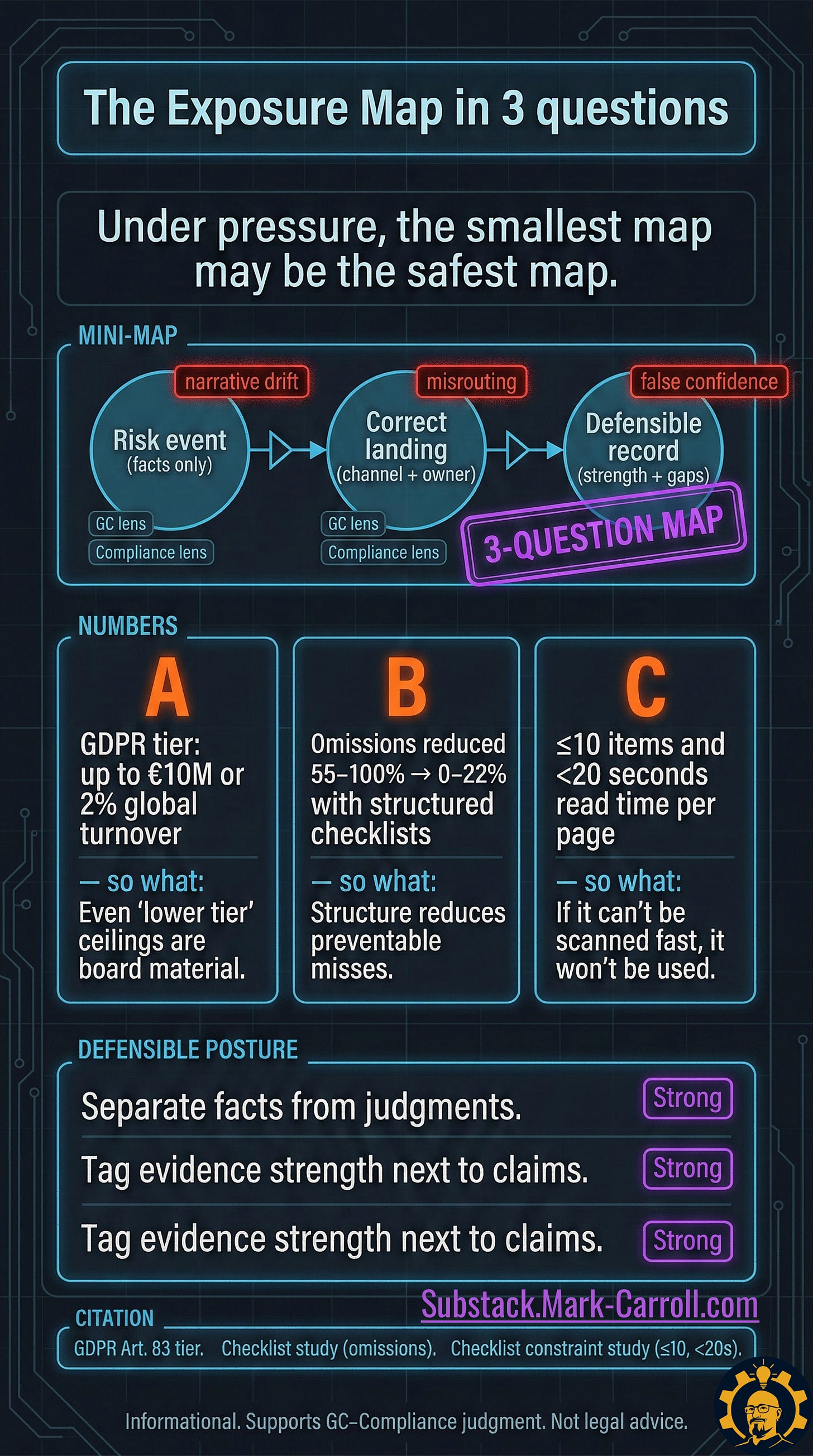

Two routes exist on paper. Internal and protected. The gap between paper and practice is where the real cost accumulates.

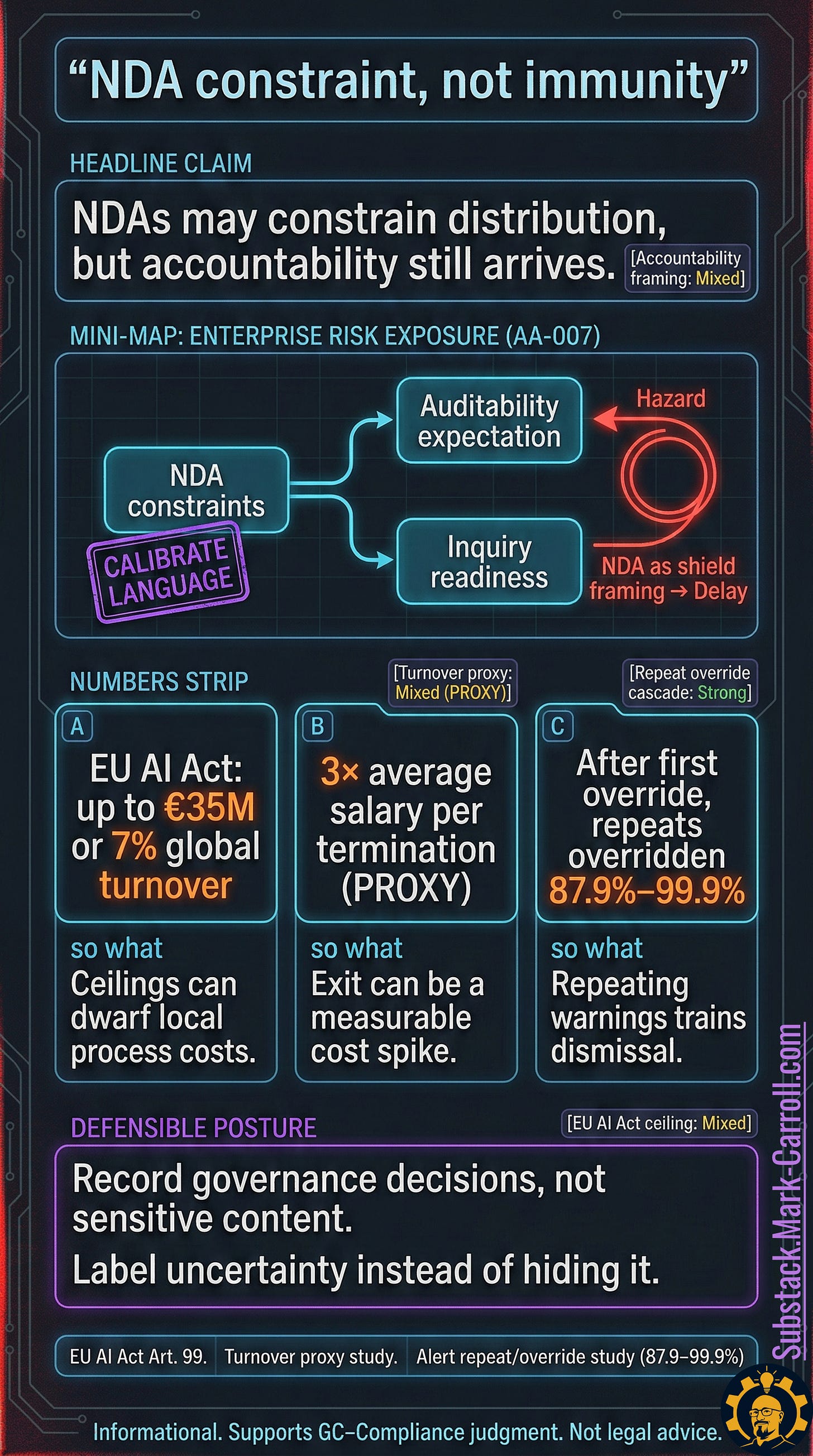

NDA constraints narrow what can be shared and who can see it, which slows triage and weakens the record.

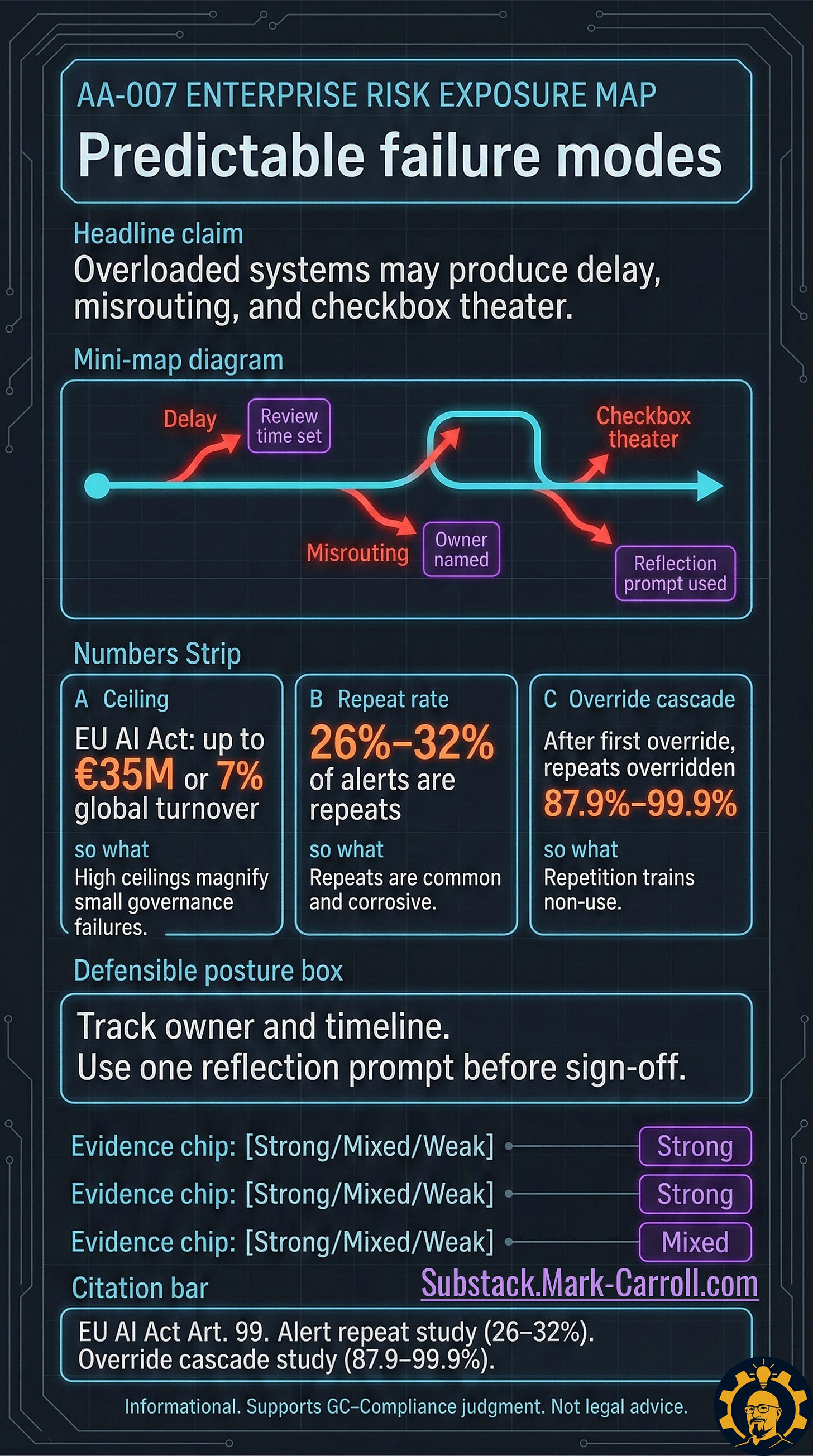

Time pressure turns reporting channels into bottlenecks. Delay. Misrouting. Checkbox theater.

A soft record, one that is verbal, partial, or undated, makes everything downstream harder. Investigation, remediation, governance, and trust all pay interest.

Costs show up late. The bill arrives after escalation, not at the moment the warning was raised. That is the trap.

The example is not hypothetical. An AI model starts generating customer-facing commitments. Someone flags it. The discussion stays verbal because “we are still figuring it out.” Two weeks later a customer escalates with screenshots. The question is no longer what happened. The question is why the system had no memory.
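What would memory look like? One possible sketch, under stated assumptions: an append-only log where each entry is timestamped and chained to the previous one by a hash, so a later edit is detectable. The field names and the functions `append_record` and `verify` are hypothetical, and the log lives in a plain in-memory list here. A real system would need durable storage and access controls, which this sketch does not attempt.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    # Hash a canonical JSON form so the same content always yields the same digest.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list[dict], reporter: str, observation: str, evidence: str) -> dict:
    """Append a timestamped entry that is chained to the previous entry's hash."""
    body = {
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "reporter": reporter,
        "observation": observation,
        "evidence": evidence,
        "prev_hash": log[-1]["hash"] if log else "",
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = ""
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


log: list[dict] = []
append_record(log, "support-lead", "Model offered an SLA penalty waiver in writing", "thread screenshot")
append_record(log, "support-lead", "No matching policy exception found", "policy search notes")
print(verify(log))
```

The chain does not make anyone braver. It makes the written version cheap to produce and hard to quietly soften, which is the part the verbal discussion cannot offer.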

The incentive behind it: most organizations reward smooth operations. So the process quietly evolves to delay bad news until it becomes too expensive to ignore. Not malice. Optimization. Same result.